Edge computing is gaining momentum as it converges with IoT, offering novel techniques for IoT systems. Multiple definitions of edge computing are found in the literature; however, the most relevant is presented in [3]. The authors define edge computing as a paradigm of enabling technologies that allow computation to be performed at the edge of the network, on downstream data on behalf of cloud services and upstream data on behalf of IoT services. The paradigm is relatively new, and for that reason the term “edge computing” in the literature may also refer to related architectures, such as MEC, cloudlet computing (CC), or fog computing (FC). However, we envision edge computing as a bridge between IoT things and the nearest physical edge device that aims to facilitate the deployment of newly emerging IoT applications on users' devices, such as mobile devices (see Figure 2.1).

In Figure 2.1, the authors of [12] present the main idea of the edge computing paradigm, which adds another element in the form of an edge device. Such a device can be a personal computer, laptop, tablet, smartphone, or another device capable of locally processing the data generated by IoT devices. Furthermore, depending on its capabilities, it may offer additional functionality, such as storing data for a limited time. In addition, an edge device can react to emergency events by communicating with the IoT devices and can aid other devices, such as a cloudlet, MEC server, or cloud data center, by preprocessing and filtering the raw data generated by the sensors. In such scenarios, the edge computing paradigm offers processing near the source of the data and reduces the amount of transmitted data. Instead of transmitting data to the cloud or a fog node, the edge device, as the nearest device to the source of the data, performs the computation and responds to the user device without moving data to the fog or cloud.
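The preprocessing and filtering role described above can be illustrated with a minimal Python sketch. It is not taken from [12]; the `Reading` type, the valid range, and the alert threshold are hypothetical values chosen only for illustration. The edge device discards implausible sensor values, reacts locally to an emergency condition, and produces a compact summary that could be forwarded upstream instead of the raw stream.

```python
from dataclasses import dataclass
from statistics import mean
from typing import Iterable


@dataclass
class Reading:
    sensor_id: str
    value: float  # e.g. a temperature in degrees Celsius (assumed unit)


# Hypothetical limits, chosen for illustration only.
VALID_RANGE = (-40.0, 85.0)   # readings outside this range are treated as faulty
ALERT_THRESHOLD = 60.0        # a valid reading at or above this triggers a local alert


def preprocess(readings: Iterable[Reading]) -> dict:
    """Filter faulty readings on the edge device and summarise the rest."""
    low, high = VALID_RANGE
    valid = [r.value for r in readings if low <= r.value <= high]
    if not valid:
        return {"count": 0, "mean": None, "max": None, "alert": False}
    peak = max(valid)
    return {
        "count": len(valid),
        "mean": mean(valid),
        "max": peak,
        # The alert is decided locally, without a round trip to fog or cloud.
        "alert": peak >= ALERT_THRESHOLD,
    }


raw = [
    Reading("t1", 21.0),
    Reading("t1", 23.0),
    Reading("t1", 999.0),  # implausible value, filtered out
    Reading("t1", 61.0),
]
summary = preprocess(raw)
print(summary)
```

In this sketch four raw readings are reduced to a single summary record, which is the sense in which edge preprocessing lowers the amount of transmitted data.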

Edge computing is considered a key enabler for scenarios where centralized cloud-based platforms are impractical. Processing data near the logical extremes of a network, at the edge of the network, significantly reduces latency and bandwidth cost. By shortening the distance that data has to travel, this paradigm could also address concerns about energy consumption, security, and privacy [13]. However, the rapid adoption of IoT devices, resulting in millions of interconnected devices, poses challenges that edge computing must overcome.

2.2.1 Edge Computing Architecture

The details of an edge computing architecture can differ from one context to another. For example, Chen et al. [14] propose an edge computing architecture for IoT-based manufacturing. The role of edge computing is analyzed with respect to edge equipment, network communication, information fusion, and the cooperative mechanism with cloud computing. Zhang et al. [15] propose an edge-based architecture and implementation for a smart home. The system consists of three distinct layers: the sensor layer, the edge layer, and the cloud layer.

Generally speaking, Wei Yu et al. divide the structure of edge computing into three parts: the front end, the near end, and the far end (see Figure 2.2). A detailed description is given below:

The front end consists of heterogeneous end devices (e.g. smartphones, sensors, actuators). This layer provides real-time responsiveness and local authority for the end users. The front-end environment also provides more interaction by keeping the heaviest traffic and processing closest to the end-user devices. However, due to the limited resources of end devices, not all requirements can be met by this layer. In such situations, the end devices must forward the resource requirements to more powerful devices, such as fog nodes or cloud computing data centers.

The near end will support most of the traffic flows in the networks. This layer provides more powerful devices, which means that most of the data processing and storage will be migrated to the near-end environment. Additionally, many tasks like caching, device management, and privacy protection can be deployed at this layer. Increasing the distance between the source of data and its processing destination (e.g. a fog node) also increases latency because of the round trip. Even so, the latency remains very low, since the devices are one hop away from the source where the data is produced and consumed.

Figure 2.2 An overview of edge computing architecture [16]. (See color plate section for the color representation of this figure)

The far end consists of cloud servers that are deployed farther away from the end devices. This layer provides devices with high processing capabilities and more data storage. Additionally, it can be configured to provide levels of performance, security, and control for both users and service providers. The far-end layer also gives any user access to vast computing power, where thousands of servers may be orchestrated to focus on a single task, such as in [17, 18]. However, one must note that transmission latency increases with the distance that data has to travel.
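The trade-off among the three layers, more capacity at the price of more latency, can be sketched as a simple placement rule. The following Python example is an illustrative assumption, not a method from [16]: the `Tier` type, the capacity figures, and the round-trip times are hypothetical numbers. A task is placed on the nearest layer that has enough capacity and still meets the task's latency bound.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Tier:
    name: str
    cpu_units: int        # hypothetical capacity available to one task
    round_trip_ms: float  # hypothetical typical round-trip latency


# Ordered from nearest to farthest; all numbers are illustrative only.
TIERS = [
    Tier("front-end", cpu_units=1, round_trip_ms=1.0),
    Tier("near-end", cpu_units=16, round_trip_ms=10.0),
    Tier("far-end", cpu_units=1024, round_trip_ms=100.0),
]


def place_task(cpu_needed: int, max_latency_ms: float) -> Optional[str]:
    """Return the nearest tier that satisfies both constraints, or None."""
    for tier in TIERS:
        if tier.cpu_units >= cpu_needed and tier.round_trip_ms <= max_latency_ms:
            return tier.name
    return None  # no layer can meet both the capacity and latency demands


print(place_task(1, 5.0))      # light, latency-critical task
print(place_task(8, 50.0))     # heavier task, forwarded one layer up
print(place_task(500, 200.0))  # heavy, latency-tolerant task
print(place_task(500, 20.0))   # heavy and latency-critical: not satisfiable here
```

The last call shows the limitation noted in the text: a task that needs far-end capacity cannot also have front-end latency.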

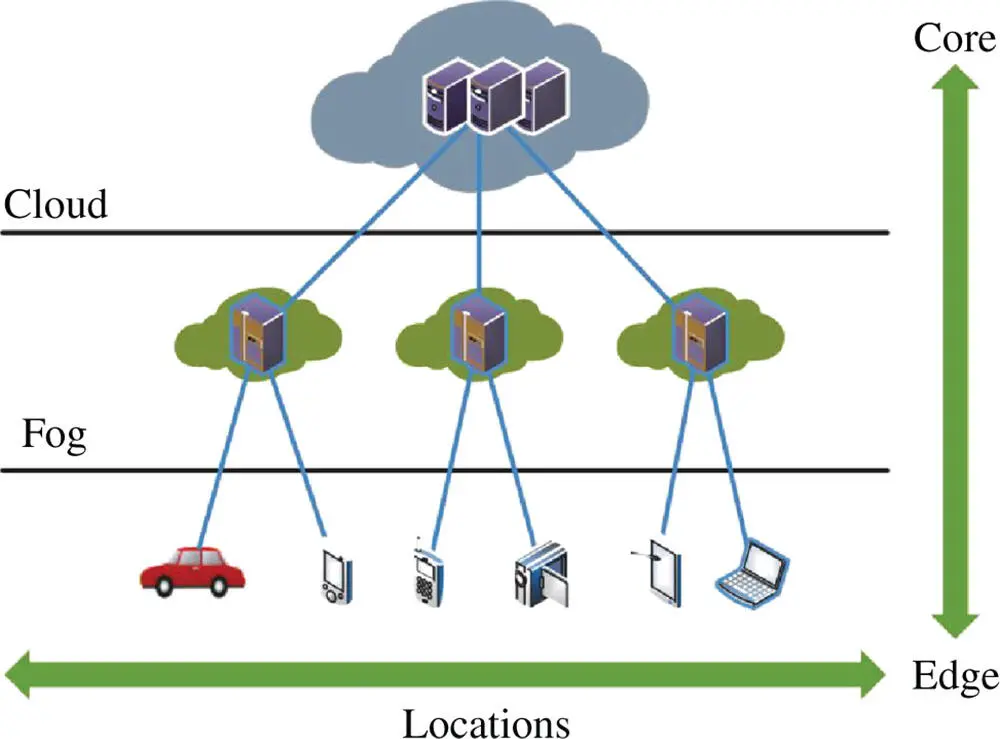

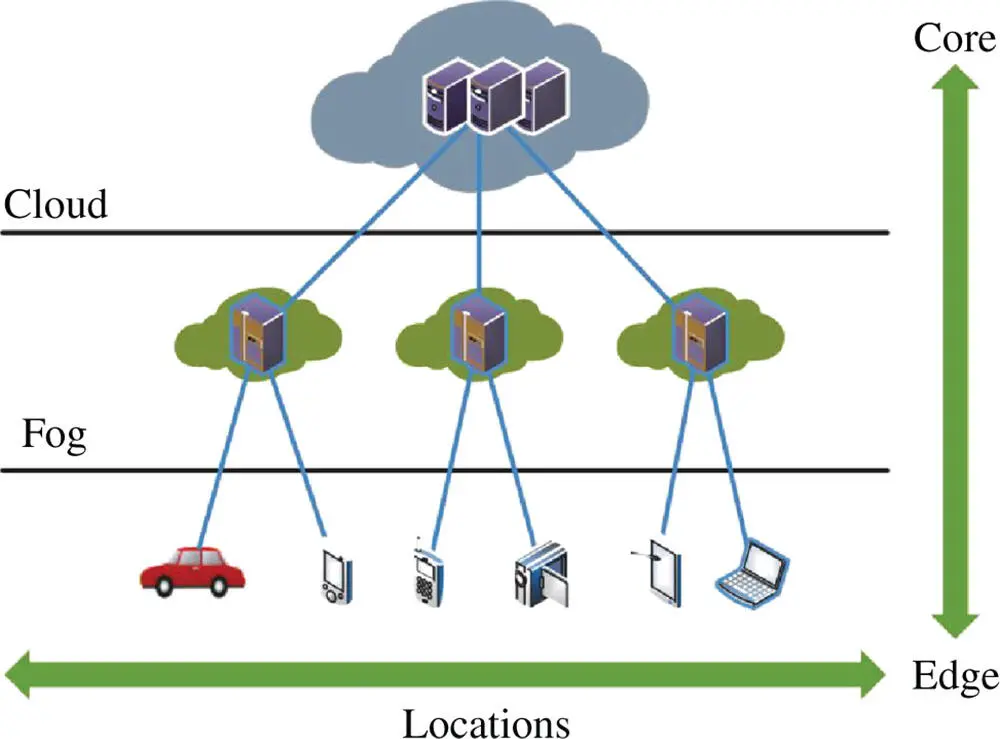

Fog computing is a platform introduced by Cisco [10] with the purpose of extending the cloud capabilities closer to the edge of the network. Multiple definitions of fog computing are found in the literature; however, the most relevant is presented in [19]. According to the authors, fog computing is a geographically distributed computing architecture connected to multiple heterogeneous devices at the edge of the network, but at the same time not exclusively seamlessly backed by cloud services. Hence, we envision fog as a bridge between the cloud and the edge of the network that aims to facilitate the deployment of newly emerging IoT applications (see Figure 2.3).

A fog device is a highly virtualized IoT node that provides computing, storage, and network services between edge devices and the cloud [2]. Such characteristics are found in the cloud as well; thus, a fog device can be characterized as a mini-cloud that utilizes its own resources in combination with data collected from the edge devices and the vast computational resources that the cloud offers.

Figure 2.3 Fog computing: a bridge between cloud and edge [20].

By migrating computational resources closer to the end devices, fog gives customers the possibility to develop and deploy new latency-sensitive applications directly on devices like routers, switches, small data centers, and access points. Depending on the application, this can impose stringent requirements: fast response time and predictable latency (e.g. smart connected vehicles, augmented reality), location awareness (e.g. sensor networks to monitor the environment), and large-scale distributed systems (e.g. smart traffic lights, smart grids). The cloud cannot fulfill all of these demands by itself.

To fill the technological gap in the current state of the art, where the cloud computing paradigm is at the center, the fog collaborates with the cloud to form a more scalable and stable system across all edge devices suitable for IoT applications. From this union, the developer benefits the most, since they can decide where it is most beneficial, fog or cloud, to deploy a function of their application. For example, taking advantage of the fog node capabilities, we can process and filter streams of collected data coming from heterogeneous devices located in different areas, make real-time decisions, and reduce the network traffic to the cloud. Thus, fog computing introduces effective ways of overcoming many limitations that the cloud is facing [1]. These limitations are:
