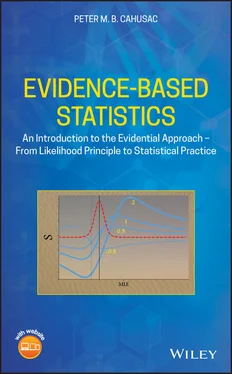

The likelihood function is shown at the bottom of Figure 1.1. It is none other than the sampling distribution that we saw around the null value, rescaled and centred instead on the sample mean. It is calculated from the data, specifically from the sample mean and variance, and it contains all the information that we can extract from the data. Its peak lies at the sample mean, which is the maximum likelihood estimate (MLE) of the population mean. The likelihood function can then be used to compare different hypothesized parameter values. Using only the heights of the curve, it gives the relative likelihood, as a ratio, of any two parameter values from competing hypotheses. We may compare any value of interest with the null. For example, we may take a value that is of practical importance, which might lie above or below the sample mean. If this value lies between the null and the sample mean, its likelihood ratio relative to the null will be greater than 1. If it lies beyond the null, on the side away from the sample mean, the ratio will be less than 1. The same pattern holds on the other side of the sample mean: the ratio stays above 1 until the counternull² value is reached, where it equals 1, and falls below 1 beyond it. The maximum LR is obtained at the sample mean. For the illustrated data, this ratio was 13.4, giving a support S (the natural logarithm of the LR) of 2.6. The evidence represented by the likelihood function is centred on the observed data statistic. The same function centred on the null, as used in significance testing, now seems somewhat artificial.
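As a minimal sketch of reading LRs off the heights of the likelihood curve, the Python below assumes a normal sampling distribution for the mean with a known standard error. The function names and the summary statistics in the usage lines are hypothetical and are not the data of Figure 1.1. The sketch reproduces the pattern described above: relative to the null, the LR exceeds 1 between the null and the counternull, peaks at the sample mean, and equals 1 at the counternull.

```python
import math


def likelihood(mu, xbar, se):
    """Height of the likelihood curve at a hypothesised mean mu.

    Assumes a normal sampling distribution with known standard error se.
    Constant factors are omitted because they cancel in any likelihood ratio.
    """
    return math.exp(-((xbar - mu) ** 2) / (2 * se ** 2))


def likelihood_ratio(mu1, mu0, xbar, se):
    """LR for mu1 relative to mu0: the ratio of the two curve heights."""
    return likelihood(mu1, xbar, se) / likelihood(mu0, xbar, se)


def support(lr):
    """Support S is the natural logarithm of the LR."""
    return math.log(lr)


def counternull(xbar, null):
    """Value the same distance from the sample mean as the null, on the other side."""
    return 2 * xbar - null


if __name__ == "__main__":
    xbar, se, null = 5.0, 2.0, 0.0  # hypothetical summary statistics
    for mu in (-1.0, 2.5, xbar, counternull(xbar, null), 12.0):
        lr = likelihood_ratio(mu, null, xbar, se)
        print(f"mu={mu:5.1f}  LR vs null={lr:8.3f}  S={support(lr):6.2f}")
    # S for the maximum LR of 13.4 quoted in the text: ln(13.4) rounds to 2.6
    print(round(support(13.4), 1))
```

Because only ratios of heights are needed, the normalising constant of the normal density is dropped; it would cancel anyway.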

The likelihood interval shown in Figure 1.1 is the one calculated for a support of 2 (S-2). It closely resembles the 95% confidence interval, although its interpretation is more direct: values within the interval are consistent with the collected data. A value outside the interval has more than moderate evidence against it relative to at least one other hypothesis value, here the sample mean.
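A minimal sketch of how an S-2 likelihood interval can be computed, again assuming a normal mean with known standard error (the function names and numbers are hypothetical). Under that assumption a value mu lies inside the interval when the support for the sample mean over mu, (x̄ − mu)² / (2·se²), is at most 2, which gives x̄ ± 2·se.

```python
import math


def likelihood_interval(xbar, se, s=2.0):
    """S-s likelihood interval for a normal mean with known standard error.

    mu is inside the interval when the support for the MLE (xbar) over mu is
    at most s: (xbar - mu)**2 / (2 * se**2) <= s, i.e. |xbar - mu| <= sqrt(2*s)*se.
    """
    half_width = math.sqrt(2 * s) * se
    return xbar - half_width, xbar + half_width


def z_confidence_interval(xbar, se, z=1.96):
    """Conventional 95% confidence interval using the normal critical value."""
    return xbar - z * se, xbar + z * se


if __name__ == "__main__":
    xbar, se = 5.0, 2.0  # hypothetical summary statistics
    print("S-2 likelihood interval:", likelihood_interval(xbar, se))
    print("95% confidence interval:", z_confidence_interval(xbar, se))
```

In this known-variance case the S-2 interval spans 2 standard errors either side of the mean, against 1.96 for the 95% confidence interval, which is why the two look so alike. The sketch does not cover t-based analyses.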

The precise meaning of the p values obtained in statistical tests is difficult for the average scientist to grasp, and even seasoned researchers misunderstand them. In contrast, the likelihood approach is conceptually simple. It uses the likelihood function, derived from the sampling distribution of the collected data, to provide comparative evidence for two specified hypotheses, and it uses nothing other than the evidence obtained in the collected sample. For p values, the tail regions of the sampling distribution centred on the null are used. These regions include values beyond the sample statistic, which were not observed. What can be the justification for including values that were not observed? Later in his career, Fisher ([27], p. 71) admits that ‘This feature is indeed not very defensible save as an approximation’. ‘To what?’ replies Edwards [28]. Interestingly, Fisher then proceeds to compare likelihoods (pp. 71–73): ‘It would, however, have been better to have compared the different possible values of p, in relation to the frequencies with which the actual values observed would have been produced by them, as is done by the Mathematical Likelihood …’, concluding that ‘The likelihood supplies a natural order of preference among the possibilities under consideration’. The use of the LR is computationally simple and intuitively attractive. Tsou and Royall observe that ‘Strong theoretical arguments imply that for directly representing and interpreting statistical data as evidence, the proper vehicle is the likelihood function’, adding pointedly that ‘These arguments have had limited impact on statistical practice’ [29]. Perhaps the ritualized [30] and over-rehearsed use of p values has made them so ingrained in the scientific community that the conceptually simpler LR statistic has now become more difficult to grasp.

1.2.3 Types of Approach Using Likelihoods

A key feature of the evidential approach is the use of an LR based on two values selected by the researcher. The LR then reveals which value is better supported by the observations. Typically, one of these values is the null hypothesis and the other a value believed to represent an effect size (see Section 1.3). Using explicit hypothesis values strengthens the inferences made from the ratio of their likelihoods. This more useful approach can be applied in many types of analysis, notably t tests of means, analysis of variance (ANOVA) using contrasts, correlation, and categorical data analyses using the binomial, the Poisson, and the odds ratio. Where precise hypothesis values cannot easily be specified, likelihood intervals are useful for showing which values are supported by the data.
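For categorical data, an LR between two researcher-chosen values can be written down directly. The sketch below is an illustration only: the function names and the data (7 successes in 10 trials, 8 events) are hypothetical, and each LR compares an "effect" value with a null value. The binomial coefficient and the factorial in the Poisson likelihood cancel from the ratio, so they are never computed.

```python
import math


def binomial_lr(successes, n, p1, p0):
    """LR for proportion p1 relative to p0, given `successes` out of `n` trials.

    The binomial coefficient appears in both likelihoods and cancels.
    """
    failures = n - successes
    return (p1 ** successes * (1 - p1) ** failures) / (
        p0 ** successes * (1 - p0) ** failures
    )


def poisson_lr(count, rate1, rate0):
    """LR for Poisson rate rate1 relative to rate0, given an observed count.

    The count! term cancels, leaving (rate1/rate0)**count * exp(rate0 - rate1).
    """
    return (rate1 / rate0) ** count * math.exp(rate0 - rate1)


if __name__ == "__main__":
    # Hypothetical data: 7 successes in 10 trials; null p = 0.5, effect p = 0.75.
    lr = binomial_lr(7, 10, 0.75, 0.5)
    print(f"binomial LR = {lr:.3f}, S = {math.log(lr):.3f}")
    # Hypothetical data: 8 events observed; null rate 4 vs effect rate 6.
    lr = poisson_lr(8, 6.0, 4.0)
    print(f"Poisson LR = {lr:.3f}, S = {math.log(lr):.3f}")
```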

When one of the chosen values is the MLE, for example the sample mean, the resulting ratio is called the maximum LR (see Section 2.1.5). This is equivalent to using p values, since there is a direct transformation between it and a p value: both measure evidence against a single value, usually the null hypothesis. This can be useful in providing the maximum possible LR, and hence support, for an effect against another specified value such as the null.
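The direct transformation between the maximum LR and a p value is easy to see in the simplest case. This sketch assumes a z test of a normal mean with known variance (other tests give different mappings), where the maximum support is S = z²/2; the function names are hypothetical.

```python
import math


def max_lr_from_z(z):
    """Maximum LR (sample mean vs null) for a normal mean with known variance.

    The log of the LR is z**2 / 2, so the maximum support is S = z**2 / 2.
    """
    return math.exp(z * z / 2)


def two_sided_p_from_z(z):
    """Two-sided p value for a standard normal test statistic."""
    return math.erfc(abs(z) / math.sqrt(2))


def two_sided_p_from_max_lr(lr):
    """Invert the mapping: |z| = sqrt(2 * ln LR), hence p = erfc(sqrt(ln LR))."""
    if lr < 1:
        raise ValueError("a maximum LR cannot be less than 1")
    return math.erfc(math.sqrt(math.log(lr)))


if __name__ == "__main__":
    for z in (1.0, 1.96, 2.58, 3.29):
        lr = max_lr_from_z(z)
        p = two_sided_p_from_z(z)
        print(f"z={z:4.2f}  max LR={lr:8.2f}  S={math.log(lr):5.2f}  p={p:.4f}")
```

Under these assumptions, a result just significant at p ≈ 0.05 (z = 1.96) corresponds to a maximum LR of only about 6.8 (S ≈ 1.92), and that is the most support the data can give any alternative over the null.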

1.2.4 Pros and Cons of Likelihood Approach

Advantages:

1 Provides an objective measure of evidence between competing hypotheses, unaffected by the intentions of the investigator.

2 Calculations tend to be simpler and are often based on commonly used statistics such as z, t, and F.

3 Likelihoods, LRs and support (log LR) can be calculated directly from the data. Statistical tables and critical values are not required.

4 The scale for support values is intuitively easy to understand, ranging from −∞ to +∞. Positive values represent support for the primary hypothesis, and negative values represent support for the secondary hypothesis.³ Zero represents no evidence for either hypothesis.

5 Support values are proportional to the quantity of data, representing the weight of evidence. This means that support values from independent studies can simply be added together.

6 Collecting additional data in a study will tend to strengthen the support for the hypothesis closest to the true value. By contrast, with p values, if significance is tested repeatedly as data accumulate, a statistically significant result will eventually be obtained at any level of α required (0.05, 0.01, 0.001, etc.), even when the null hypothesis is true.

7 Categorical data analyses are not restricted by normality assumptions, and within a given model, support values sum algebraically.

8 It is versatile and has unlimited flexibility for model comparisons.

9 The stronger the evidence, the less likely it is to be misleading: the probability of observing misleading evidence with support of m or more for one hypothesis over another has a universal bound of e⁻ᵐ.

10 Unlike other approaches based on probabilities, it is unaffected by transformations of variables.
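To see how the universal bound in item 9 plays out in one concrete case, the sketch below assumes a normal mean with known σ and two simple hypotheses, H0 (true) and H1 (false). With δ = √n·|μ1 − μ0|/σ, the support for H1 under H0 is S = δz − δ²/2 with z standard normal, so the probability of misleading support of at least m is Φ(−m/δ − δ/2). The model, the function names, and the grid of δ values are assumptions for illustration.

```python
import math


def normal_cdf(x):
    """Standard normal CDF via the complementary error function."""
    return 0.5 * math.erfc(-x / math.sqrt(2))


def prob_misleading(m, delta):
    """P(S >= m in favour of H1) when H0 is actually true.

    Assumed model: normal mean, known sigma, simple H0: mu0 vs H1: mu1,
    delta = sqrt(n) * |mu1 - mu0| / sigma. Under H0 the support for H1 is
    S = delta * z - delta**2 / 2 with z ~ N(0, 1), so
    P(S >= m) = Phi(-m / delta - delta / 2).
    """
    return normal_cdf(-m / delta - delta / 2)


def universal_bound(m):
    """Universal bound on the probability of misleading support of at least m."""
    return math.exp(-m)


if __name__ == "__main__":
    deltas = [0.1 * k for k in range(1, 101)]  # standardised distances 0.1 to 10
    for m in (1.0, 2.0, 3.0):
        worst = max(prob_misleading(m, d) for d in deltas)
        print(f"m={m}: worst case={worst:.4f}  bound e^-m={universal_bound(m):.4f}")
```

In this model the worst case over δ occurs at δ = √(2m) and equals Φ(−√(2m)), for example about 0.023 for m = 2, well inside the bound e⁻² ≈ 0.135. The e⁻ᵐ bound is the guarantee that holds without assuming any particular model.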

Disadvantages:

1 Does not have a specific threshold between statistically unimportant and important.

2 Few major statistical packages support the evidential approach.

3 Few statistics curricula include the evidential approach.

4 Hence, few researchers are familiar with the evidential approach.

1.3 Effect Size – True If Huge!

Breaking news! Massive story! Huge if true! These are phrases used in media headlines to report the latest outrage or scoop. How do we decide how big the story is? Well, there may be several dimensions: timing (e.g. novelty), proximity (cultural and geographical), prominence (celebrities), and magnitude (e.g. number of deaths). In science, the issues of effect size and impact may be more prosaic but are actually of great importance. Indeed, this issue has been sadly neglected in statistical teaching and practice, where too much emphasis has been put on whether a result is statistically significant or not. As Cohen [31] observed, ‘The primary product of a research inquiry is one or more measures of effect size, not p values’. We need to ask what the effect size is and how we should measure it.
