We denote:

– [X] = [x1, x2, ... , xn]: the set of all the symbols at the input of the channel;

– [Y] = [y1, y2, ... , ym]: the set of all the symbols at the output of the channel;

– [P(X)] = [p(x1), p(x2), ... , p(xn)]: the vector of the probabilities of the symbols at the input of the channel;

– [P(Y)] = [p(y1), p(y2), ... , p(ym)]: the vector of the probabilities of the symbols at the output of the channel.

Because of the perturbations, the space [ Y ] can be different from the space [ X ], and the probabilities P ( Y ) can be different from the probabilities P ( X ).

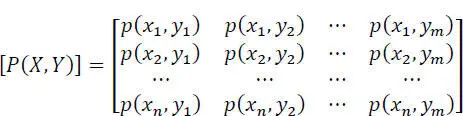

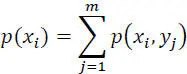

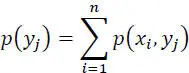

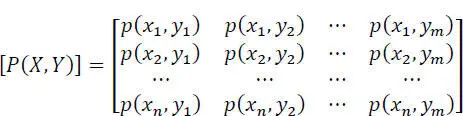

We define a product space [ X • Y ] and we introduce the matrix of the probabilities of the joint symbols, input-output [ P ( X, Y )]:

[2.20]

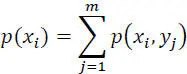

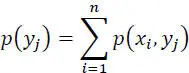

We deduce, from this matrix of probabilities:

[2.21]

[2.22]
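As a minimal sketch of this step, assuming that the deduced quantities are the marginal probabilities p(xi) and p(yj), the joint matrix can be summed along its rows and columns. The 2 × 2 matrix and the name `marginals` are hypothetical and serve only as an illustration.

```python
# Hypothetical joint probability matrix P(X, Y):
# row i is the input symbol x_i, column j is the output symbol y_j.
joint = [
    [0.40, 0.10],
    [0.05, 0.45],
]


def marginals(joint):
    """Return (p_x, p_y): row sums give p(x_i), column sums give p(y_j)."""
    p_x = [sum(row) for row in joint]
    p_y = [sum(col) for col in zip(*joint)]
    return p_x, p_y


p_x, p_y = marginals(joint)
print("P(X) =", p_x)
print("P(Y) =", p_y)
```

Both vectors sum to 1 because the entries of the joint matrix do.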

We then define the following entropies:

– the entropy of the source: [2.23]

– the entropy of variable Y at the output of the transmission channel: [2.24]

– the entropy of the two joint variables (X, Y), input-output: [2.25]
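The three entropies can be evaluated numerically. The sketch below assumes the usual Shannon definition with base-2 logarithms (results in bits) and reuses a hypothetical joint matrix; the function name `entropy` is illustrative.

```python
from math import log2


def entropy(probs):
    """Shannon entropy in bits; zero-probability terms contribute nothing."""
    return -sum(p * log2(p) for p in probs if p > 0)


# Hypothetical joint matrix P(X, Y): rows are inputs, columns are outputs.
joint = [
    [0.40, 0.10],
    [0.05, 0.45],
]

p_x = [sum(row) for row in joint]          # marginal of the input
p_y = [sum(col) for col in zip(*joint)]    # marginal of the output

h_x = entropy(p_x)                                   # entropy of the source
h_y = entropy(p_y)                                   # entropy at the output
h_xy = entropy([p for row in joint for p in row])    # joint entropy

print(h_x, h_y, h_xy)
```

For any joint matrix, the joint entropy lies between the larger of the two marginal entropies and their sum.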

2.5.1. Conditional entropies

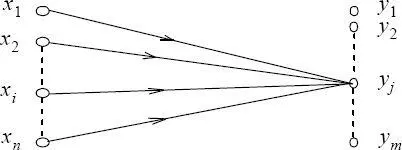

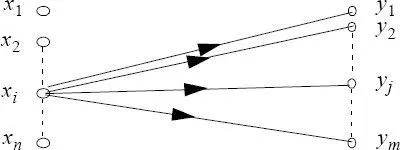

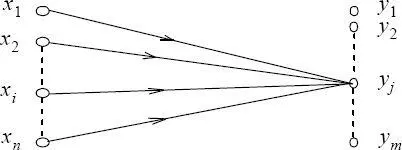

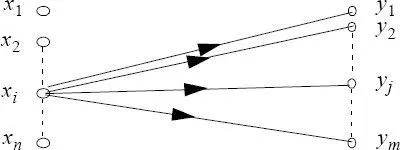

Because of the disturbances in the transmission channel, if the symbol yj appears at the output, there is uncertainty about which symbol xi, i = 1, ... , n, has been sent.

Figure 2.3. Ambiguity on the symbol at the input when yj is received

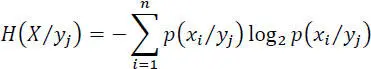

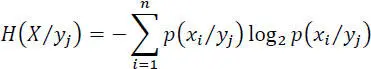

The average value of this uncertainty, or the entropy associated with the receipt of the symbol yj , is:

[2.26]

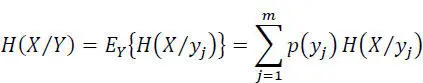

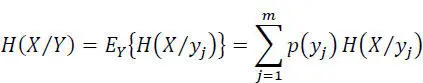

The mean value of this entropy for all the possible symbols yj received is:

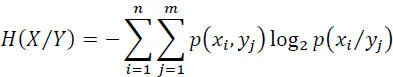

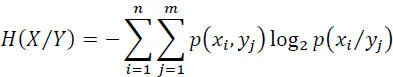

[2.27]

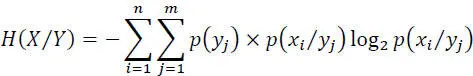

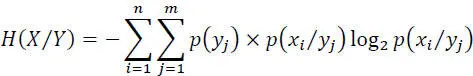

Which can be written as:

[2.28]

or:

[2.29]

The entropy H ( X/Y ) is called the equivocation (ambiguity) and corresponds to the loss of information due to disturbances (since I ( X, Y ) = H ( X ) − H ( X/Y )). This is made precise further on.
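The equivocation can be computed directly from the joint matrix. This sketch assumes the standard form H(X/Y) = −Σi Σj p(xi, yj) log2 p(xi/yj), with p(xi/yj) = p(xi, yj) / p(yj); the function name and the numerical values are hypothetical.

```python
from math import log2


def equivocation(joint):
    """H(X/Y) in bits, for a joint matrix with inputs as rows, outputs as columns."""
    p_y = [sum(col) for col in zip(*joint)]
    total = 0.0
    for row in joint:
        for j, p_xy in enumerate(row):
            if p_xy > 0:
                # p_xy / p_y[j] is the conditional probability p(x_i / y_j)
                total -= p_xy * log2(p_xy / p_y[j])
    return total


joint = [
    [0.40, 0.10],
    [0.05, 0.45],
]
h_x_given_y = equivocation(joint)
print(h_x_given_y)
```

With this equiprobable binary source, H(X) = 1 bit, so the printed value is the part of that bit which remains uncertain after the output has been observed.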

Because of disturbances, if the symbol xi is emitted, there is uncertainty about the received symbol yj, j = 1, ... , m.

Figure 2.4. Uncertainty on the output when we know the input

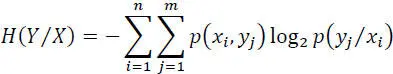

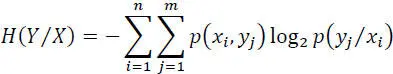

The entropy of the random variable Y at the output, given the variable X at the input, is:

[2.30]

This entropy is a measure of the uncertainty on the output variable when the input variable is known.

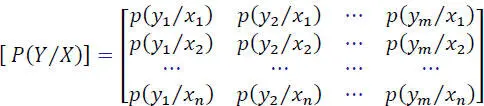

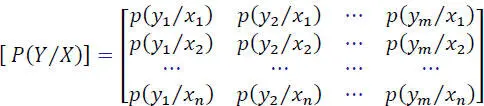

The matrix P(Y/X) is called the channel noise matrix:

[2.31]

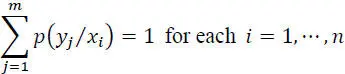

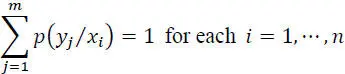

A fundamental property of this matrix is:

[2.32]

where p ( yj / xi ) is the probability of receiving the symbol yj when the symbol xi has been emitted.

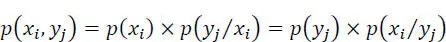

In addition, one has:

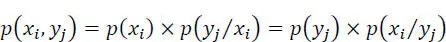

[2.33]

with:

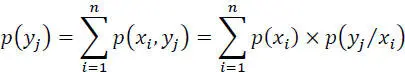

[2.34]

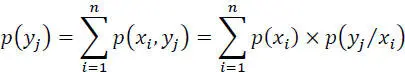

p ( yj ) is the probability of receiving the symbol yj whatever the symbol xi emitted, and:

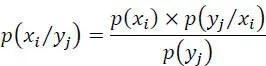

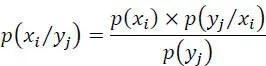

[2.35]

p ( xi / yj ) is the probability that the symbol xi was emitted when the symbol yj is received.
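The chain from the input probabilities and the noise matrix to the output and a posteriori probabilities can be sketched as follows. The sketch assumes the usual relations p(xi, yj) = p(xi) p(yj/xi), p(yj) = Σi p(xi, yj) and p(xi/yj) = p(xi, yj) / p(yj); the function name `through_channel` and the numerical values are hypothetical.

```python
def through_channel(p_x, noise):
    """From p(x_i) and the noise matrix p(y_j / x_i), return
    the joint matrix p(x_i, y_j), the vector p(y_j) and the matrix p(x_i / y_j)."""
    # Each row of the noise matrix is a probability distribution over the outputs.
    for row in noise:
        if abs(sum(row) - 1.0) > 1e-9:
            raise ValueError("each row of the noise matrix must sum to 1")
    joint = [[px * p for p in row] for px, row in zip(p_x, noise)]
    p_y = [sum(col) for col in zip(*joint)]
    posterior = [
        [p_xy / py if py > 0 else 0.0 for p_xy, py in zip(row, p_y)]
        for row in joint
    ]
    return joint, p_y, posterior


p_x = [0.5, 0.5]                 # hypothetical input probabilities
noise = [[0.8, 0.2],             # p(y_j / x_1)
         [0.1, 0.9]]             # p(y_j / x_2)

joint, p_y, posterior = through_channel(p_x, noise)
print(p_y)
print(posterior)
```

Each row of the noise matrix sums to 1, whereas in the a posteriori matrix it is each column (fixed yj) that sums to 1.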

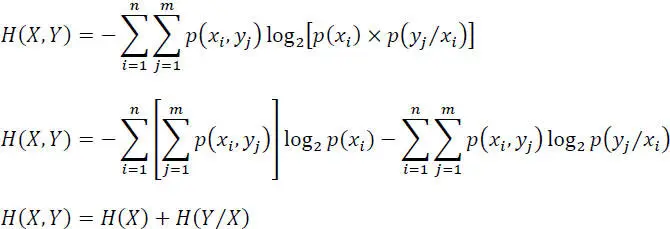

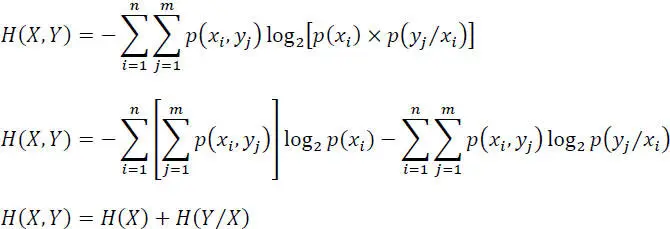

2.5.2. Relations between the various entropies

We can write:

[2.36]

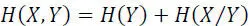

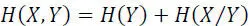

In the same way, since H ( Y, X ) = H ( X, Y ), we have:

[2.37]

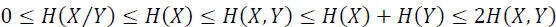

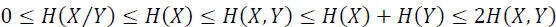

In addition, one has the following inequalities:

[2.38]

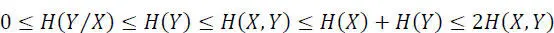

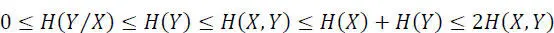

and similarly:

[2.39]
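These relations can be checked numerically. The sketch below assumes they take the standard forms H(X, Y) = H(X) + H(Y/X) = H(Y) + H(X/Y), H(X/Y) ≤ H(X) and H(Y/X) ≤ H(Y); the helper names and the joint matrix are hypothetical.

```python
from math import log2


def entropy(probs):
    """Shannon entropy in bits."""
    return -sum(p * log2(p) for p in probs if p > 0)


def cond_entropy_given_rows(joint):
    """Entropy of the column variable given the row variable, in bits."""
    total = 0.0
    for row in joint:
        p_row = sum(row)
        total -= sum(p * log2(p / p_row) for p in row if p > 0)
    return total


joint = [
    [0.40, 0.10],
    [0.05, 0.45],
]
transposed = [list(col) for col in zip(*joint)]

h_x = entropy([sum(row) for row in joint])
h_y = entropy([sum(row) for row in transposed])
h_xy = entropy([p for row in joint for p in row])
h_y_given_x = cond_entropy_given_rows(joint)        # H(Y/X)
h_x_given_y = cond_entropy_given_rows(transposed)   # H(X/Y)

print(h_xy, h_x + h_y_given_x, h_y + h_x_given_y)
print(h_x_given_y <= h_x, h_y_given_x <= h_y)
```

The three values on the first printed line agree up to rounding, and both comparisons on the second line hold.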

SPECIAL CASES.–

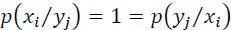

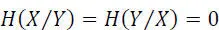

– Noiseless channel: in this case, on receipt of yj, there is certainty about the symbol actually transmitted, say xi (one-to-one correspondence); therefore:

[2.40]

Consequently:

[2.41]
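The noiseless case can be illustrated with a noise matrix that is a permutation matrix, so that each output symbol identifies exactly one input symbol. This is a sketch under that assumption, with hypothetical values; it checks that the equivocation vanishes and that the joint entropy then reduces to the entropy of the source.

```python
from math import log2

p_x = [0.5, 0.3, 0.2]            # hypothetical input probabilities
noise = [[0.0, 1.0, 0.0],        # x_1 is always received as y_2
         [1.0, 0.0, 0.0],        # x_2 is always received as y_1
         [0.0, 0.0, 1.0]]        # x_3 is always received as y_3

joint = [[px * p for p in row] for px, row in zip(p_x, noise)]
p_y = [sum(col) for col in zip(*joint)]

# Equivocation H(X/Y): every nonzero term has p(x_i / y_j) = 1, so its log is 0.
h_x_given_y = -sum(
    p_xy * log2(p_xy / p_y[j])
    for row in joint
    for j, p_xy in enumerate(row)
    if p_xy > 0
)

h_x = -sum(p * log2(p) for p in p_x if p > 0)
h_xy = -sum(p * log2(p) for row in joint for p in row if p > 0)

print(h_x_given_y, h_x, h_xy)
```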
