Chris McCain - Mastering VMware® Infrastructure3

Excerpt from Chris McCain, Mastering VMware® Infrastructure3. City: Indianapolis. Year: 2008. Publisher: Wiley Publishing, Inc. Genre: Software, Operating Systems, and Networks. Language: English.

- Title: Mastering VMware® Infrastructure3

- Author: Chris McCain

- Publisher: Wiley Publishing, Inc.

- Year: 2008

- City: Indianapolis

- ISBN: 978-0-470-18313-7


In addition to configuring zoning at the fibre channel switches, LUNs must be presented, or not presented, to an ESX Server. This process of LUN masking, or hiding LUNs from a fibre channel node, is another means of ensuring that a server does not have access to a LUN. As the name implies, this is done at the LUN level inside the storage device and not on the fibre channel switch. More specifically, the storage processor (SP) on the storage device allows LUNs to be made visible or invisible to the fibre channel nodes that are available based on the zoning configuration. A host from which a LUN has been masked cannot store data on, or retrieve data from, that LUN.

Zoning provides security at a higher, more global level, whereas LUN masking is a more granular approach to LUN security and access control. The zoning and LUN masking strategies of your fibre channel network will have a significant impact on the functionality of your virtual infrastructure. You will learn in Chapter 9 that LUN access is critical to the advanced VMotion, DRS, and HA features of VirtualCenter.

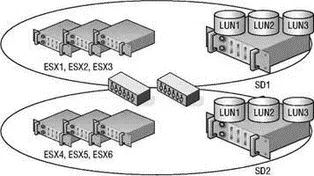

Figure 4.13 shows a fibre channel switch fabric with multiple storage devices and LUNs configured on each storage device. Table 4.3 describes a LUN access matrix that could help a storage administrator and VI3 administrator work collaboratively on planning the zoning and LUN masking strategies.

Figure 4.13: A fibre channel network consists of multiple hosts, multiple storage devices, and LUNs across each storage device. Not every host needs access to every storage device or every LUN, so zoning and masking are a critical part of SAN design and configuration.

Fibre channel storage networks are synonymous with “high performance” storage systems. Arguably, this is in large part due to the efficient manner in which communication is managed by the fibre channel switches. Fibre channel switches work intelligently to reduce, if not eliminate, oversubscription problems in which multiple links are funnelled into a single link. Oversubscription results in information being dropped. With less loss of data on fibre channel networks, there is reduced need for retransmission of data and, in turn, processing power becomes available to process new storage requests instead of retransmitting old requests.
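The funnelling described above is often summarized as an oversubscription ratio: the combined bandwidth of the links feeding a shared link, divided by the bandwidth of that shared link. The following is a minimal sketch of that arithmetic. The function name and the link speeds are illustrative assumptions, not figures from this chapter.

```python
def oversubscription_ratio(ingress_gbps, egress_gbps):
    """Return total inbound bandwidth divided by the shared outbound bandwidth.

    ingress_gbps: iterable of link speeds (Gbps) funnelled into one link.
    egress_gbps:  speed (Gbps) of the single link they share.
    """
    if egress_gbps <= 0:
        raise ValueError("egress bandwidth must be positive")
    return sum(ingress_gbps) / egress_gbps


# Hypothetical example: six 2 Gbps host links funnelled into one 4 Gbps link.
host_links = [2, 2, 2, 2, 2, 2]
ratio = oversubscription_ratio(host_links, 4)
print(f"{ratio:.1f}:1")  # 12 / 4 = 3.0, so this prints "3.0:1"
```

A ratio of 1.0 or lower means the shared link can carry all inbound traffic at full speed; anything higher means the hosts can collectively offer more traffic than the shared link can carry.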

Since fibre channel storage is currently the most efficient SAN technology, it is a common back end to a VI3 environment. ESX has native support for connecting to fibre channel networks through the host bus adapter. However, ESX Server has limited support for the available storage devices and host bus adapters. Before investing in a SAN, make sure it is compatible with, and supported by, VMware. Even if a SAN is capable of "working" with ESX, that does not mean VMware will provide support for it. VMware is very stringent about hardware support for VI3; therefore, you should always implement hardware that has been tested by VMware.

Table 4.3: LUN Access Matrix

| Host | SD1 | SD2 | LUN1 | LUN2 | LUN3 |
|---|---|---|---|---|---|
| ESX1 | Yes | No | Yes | Yes | No |
| ESX2 | Yes | No | No | Yes | Yes |
| ESX3 | Yes | No | Yes | Yes | Yes |
| ESX4 | No | Yes | Yes | Yes | Yes |
| ESX5 | No | Yes | Yes | No | Yes |
| ESX6 | No | Yes | Yes | No | Yes |

Note: The processes of zoning and masking can be facilitated by generating a matrix that defines which hosts should have access to which storage devices and which LUNs.
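One way to put such a matrix to work during planning is to hold it as data and query it. The sketch below transcribes Table 4.3 and treats access as requiring both a zone to the storage device and an unmasked LUN. It is a planning aid only, not a VMware or SAN vendor tool. The names `ACCESS_MATRIX`, `can_access`, and `hosts_sharing` are hypothetical, and the code assumes the LUN columns refer to LUNs on whichever storage device the host is zoned to.

```python
# Table 4.3 transcribed as data.
# Assumption: the LUN columns apply to the storage device (SD) a host is zoned to.
ACCESS_MATRIX = {
    "ESX1": {"zoned": {"SD1"}, "unmasked": {"LUN1", "LUN2"}},
    "ESX2": {"zoned": {"SD1"}, "unmasked": {"LUN2", "LUN3"}},
    "ESX3": {"zoned": {"SD1"}, "unmasked": {"LUN1", "LUN2", "LUN3"}},
    "ESX4": {"zoned": {"SD2"}, "unmasked": {"LUN1", "LUN2", "LUN3"}},
    "ESX5": {"zoned": {"SD2"}, "unmasked": {"LUN1", "LUN3"}},
    "ESX6": {"zoned": {"SD2"}, "unmasked": {"LUN1", "LUN3"}},
}


def can_access(host, storage_device, lun):
    """True only if the host is zoned to the device AND the LUN is not masked."""
    entry = ACCESS_MATRIX.get(host)
    if entry is None:
        return False
    return storage_device in entry["zoned"] and lun in entry["unmasked"]


def hosts_sharing(storage_device, lun):
    """Sorted list of hosts that can all reach the same LUN on a device."""
    return sorted(h for h in ACCESS_MATRIX if can_access(h, storage_device, lun))


print(can_access("ESX1", "SD1", "LUN3"))  # False: LUN3 is masked from ESX1
print(can_access("ESX1", "SD2", "LUN1"))  # False: ESX1 is not zoned to SD2
print(hosts_sharing("SD1", "LUN2"))       # ['ESX1', 'ESX2', 'ESX3']
```

A query like `hosts_sharing` is useful because features that depend on shared LUN access only work between hosts that appear in the same result list.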

Always check the compatibility guides before adding new servers, new hardware, or new storage devices to your virtual infrastructure.

Since VMware is the only company (at this time) that provides drivers for hardware supported by ESX, you must be cautious when adding new hardware like host bus adapters. The bright side, however, is that so long as you opt for a VMware-supported HBA, you can be certain it will work without incurring any of the driver conflicts or misconfiguration common in other operating systems.

You can find a complete list of compatible SAN devices on VMware's website at http://www.vmware.com/pdf/vi3_san_guide.pdf. Be sure to check the guides regularly, as they are updated often. When testing a fibre channel SAN against ESX, VMware identifies compatibility in all of the following areas:

♦ Basic connectivity to the device.

♦ Multipathing capability for allowing access to storage via different paths.

♦ Host bus adapter (HBA) failover support for eliminating single point of failure at the HBA.

♦ Storage port failover capability for eliminating single point of failure on the storage device.

♦ Support for Microsoft Clustering Services (MSCS) for building server clusters when the guest operating system is Windows 2000 Service Pack 4 or Windows 2003.

♦ Boot-from-SAN capability for booting an ESX server from a SAN LUN.

♦ Point-to-point connectivity support for nonswitch-based fibre channel network configurations.

Naturally, since VMware is owned by EMC Corporation, you can find a great deal of compatibility between ESX Server and the EMC line of fibre channel storage products (also sold by Dell). Each of the following vendors provides storage products that have been tested by VMware:

♦ 3PAR: http://www.3par.com

♦ Bull: http://www.bull.com

♦ Compellent: http://www.compellent.com

♦ Dell: http://www.dell.com

♦ EMC: http://www.emc.com

♦ Fujitsu/Fujitsu Siemens: http://www.fujitsu.com and http://www.fujitsu-siemens.com

♦ HP: http://www.hp.com

♦ Hitachi/Hitachi Data Systems (HDS): http://www.hitachi.com and http://www.hds.com

♦ IBM: http://www.ibm.com

♦ NEC: http://www.nec.com

♦ Network Appliance (NetApp): http://www.netapp.com

♦ Nihon Unisys: http://www.unisys.com

♦ Pillar Data: http://www.pillardata.com

♦ Sun Microsystems: http://www.sun.com

♦ Xiotech: http://www.xiotech.com

Although the nuances, software, and practices for managing storage devices across different vendors will most certainly differ, the concepts of SAN storage covered in this book transcend the vendor boundaries and can be used across various platforms.

Currently, ESX Server supports many different QLogic 236x and 246x fibre channel HBAs for connecting to fibre channel storage devices. However, because the list can change over time, you should always check the compatibility guides before purchasing and installing a new HBA.

It certainly does not make sense to make a significant financial investment in a fibre channel storage device and still have a single point of failure at each server in the infrastructure. We recommend that you build redundancy into the infrastructure at each point of potential failure. As shown in the diagrams earlier in the chapter, each ESX Server host should be equipped with a minimum of two fibre channel HBAs to provide redundant path capabilities in the event of HBA failure. ESX Server 3 supports a maximum of 16 HBAs per system and a maximum of 15 targets per HBA. The 16-HBA maximum can be achieved with four quad-port HBAs or eight dual-port HBAs, provided that the server chassis has enough expansion capacity.
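The HBA arithmetic above can be checked with a short script. This is a minimal sketch, assuming (as the four quad-port and eight dual-port examples imply) that each port counts as one HBA toward the limit of 16. The function name `check_hba_plan` and its messages are hypothetical.

```python
MAX_HBAS_PER_HOST = 16   # ESX Server 3 limit stated in the text
MIN_HBAS_FOR_REDUNDANCY = 2  # minimum recommended in the text


def check_hba_plan(ports_per_card):
    """Return (total_hbas, problems) for a list of port counts, one per card.

    Assumption: every port on a multi-port card counts as one HBA.
    """
    total = sum(ports_per_card)
    problems = []
    if total < MIN_HBAS_FOR_REDUNDANCY:
        problems.append("fewer than two HBAs: no redundant path")
    if total > MAX_HBAS_PER_HOST:
        problems.append("more than 16 HBAs: exceeds the ESX Server 3 maximum")
    return total, problems


print(check_hba_plan([4, 4, 4, 4]))  # four quad-port cards  -> (16, [])
print(check_hba_plan([2] * 8))       # eight dual-port cards -> (16, [])
print(check_hba_plan([1]))           # one single-port card  -> flagged, no redundancy
```

Note that the check counts ports only. Two ports on one physical card satisfy the count but still share that card as a point of failure, so a stricter plan might also require at least two separate cards.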

Adding a new HBA requires that the physical server be turned off, since ESX Server does not support adding hardware while the server is running, otherwise known as a “hot add” of hardware. Figure 4.14 displays the redundant HBA and storage processor (SP) configuration of a VI3 environment.
