where:

$C_i$ = worst-case execution time associated with periodic task $i$

$T_i$ = period associated with task $i$

$B_i$ = the longest duration of blocking that can be experienced by task $i$

$n$ = number of tasks

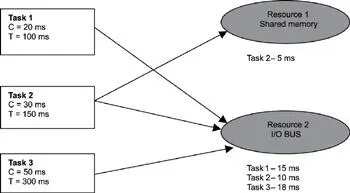

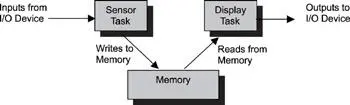

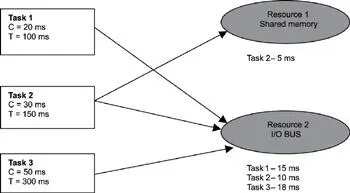

This equation is best demonstrated with an example. This example uses the same three tasks provided in Table 14.3 and inserts two shared resources, as shown in Figure 14.7. In this case, the two resources represent a shared memory (resource #1) and an I/O bus (resource #2).

Figure 14.7: Example setup for extended RMA.

Task #1 makes use of resource #2 for 15ms at a rate of once every 100ms. Task #2 is a little more complex. It is the only task that uses both resources. Resource #1 is used for 5ms, and resource #2 is used for 10ms. Task #2 must run at a rate of once every 150ms.

Task #3 has the lowest frequency of the tasks and runs once every 300ms. Task #3 also uses resource #2 for 18ms.

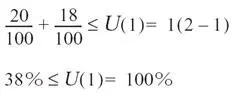

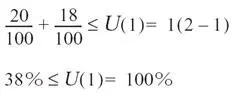

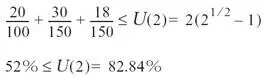

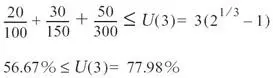

Now looking at schedulability, Equation 14.2 yields three separate equations, one per task, each of which must be verified against a utility bound. Let's take a closer look at the first equation.

Either task #2 or task #3 can block task #1 by using resource #2. The blocking factor $B_1$ is the greater of the times that task #2 or task #3 holds the resource, which is 18ms, from task #3. Applying the numbers to Equation 14.2 gives a result below the utility bound of 100% for task #1. Hence, task #1 is schedulable.

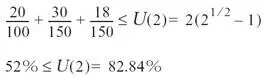
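The blocking-factor reasoning used in this example can be sketched in a few lines of Python. This is a minimal sketch under a simplifying assumption: a task is blocked only by a lower-priority task holding a resource that the task itself uses. The names `RESOURCE_USE` and `blocking_factor` are hypothetical; the hold times come from the task descriptions above.

```python
# Resource hold times in milliseconds, listed in rate-monotonic priority
# order (index 0 = task #1 = highest priority). Values are from the text.
RESOURCE_USE = [
    {"R2": 15},           # task #1 uses resource #2
    {"R1": 5, "R2": 10},  # task #2 uses both resources
    {"R2": 18},           # task #3 uses resource #2
]


def blocking_factor(i, resource_use):
    """Longest time a lower-priority task holds a resource that task i uses.

    Simplified model (an assumption of this sketch): only direct blocking
    on a shared resource is considered.
    """
    mine = resource_use[i]
    longest = 0
    for lower in resource_use[i + 1:]:
        for resource, hold_ms in lower.items():
            if resource in mine:
                longest = max(longest, hold_ms)
    return longest


BLOCKING = [blocking_factor(i, RESOURCE_USE) for i in range(len(RESOURCE_USE))]
print(BLOCKING)
```

The lowest-priority task has no lower-priority tasks to wait for, so its factor comes out as 0 without any special case.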

Looking at the second equation, task #2 can be blocked by task #3. The blocking factor $B_2$ is 18ms, which is the time task #3 has control of resource #2, as shown:

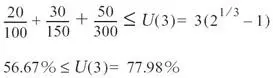

Task #2 is also schedulable, as the result is below the utility bound for two tasks. Now looking at the last equation, note that $B_n$ is always equal to 0. The blocking factor for the lowest-priority task is always 0, because no other task can block it (they all preempt it if they need to).

Again, the result is below the utility bound for the three tasks, and, therefore, all tasks are schedulable.
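The three checks can also be run mechanically. The sketch below assumes Equation 14.2 has the usual extended-RMA form: for each task $i$, the utilizations of tasks 1 through $i$ plus $B_i/T_i$ must not exceed the bound $i(2^{1/i} - 1)$. The periods and blocking factors are taken from the text. The execution times in `EXEC_MS` are placeholders assumed for illustration, because Table 14.3 is not reproduced in this section; substitute the table's values. Function names are hypothetical.

```python
def utilization_bound(i):
    """Utility bound for i tasks: i * (2**(1/i) - 1)."""
    return i * (2 ** (1.0 / i) - 1)


def extended_rma(exec_ms, period_ms, blocking_ms):
    """Return one (utilization, bound, ok) tuple per task, highest priority first."""
    results = []
    for i in range(len(exec_ms)):
        # Utilization of task i and of every higher-priority task ...
        total = sum(c / t for c, t in zip(exec_ms[: i + 1], period_ms[: i + 1]))
        # ... plus the blocking term for task i itself.
        total += blocking_ms[i] / period_ms[i]
        bound = utilization_bound(i + 1)
        results.append((total, bound, total <= bound))
    return results


PERIOD_MS = [100, 150, 300]  # from the text
BLOCKING_MS = [18, 18, 0]    # from the text
EXEC_MS = [20, 30, 50]       # ASSUMED placeholders; use the values in Table 14.3

RESULTS = extended_rma(EXEC_MS, PERIOD_MS, BLOCKING_MS)
for number, (used, bound, ok) in enumerate(RESULTS, start=1):
    verdict = "schedulable" if ok else "NOT schedulable"
    print(f"task #{number}: {used:.1%} against bound {bound:.1%} -> {verdict}")
```

Because the bound shrinks as more tasks are included, each task is compared against its own bound rather than against a single system-wide figure.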

Other extensions to basic RMA address its remaining assumptions, such as accounting for aperiodic tasks in real-time systems. Consult the listed references for additional reading on RMA and related material.

Some points to remember include the following:

· An outside-in approach can be used to decompose applications at the top level.

· Device dependencies can be used to decompose applications.

· Event dependencies can be used to decompose applications.

· Timing dependencies can be used to decompose applications.

· Levels of criticality of workload involved can be used to decompose applications.

· Functional cohesion, temporal cohesion, or sequential cohesion can be used either to form a task or to combine tasks.

· Rate Monotonic Scheduling can be summarized by stating that a task's priority depends on its period: the shorter the period, the higher the priority. RMS, when implemented appropriately, produces stable and predictable performance.

· Schedulability analysis only looks at how systems meet temporal requirements, not functional requirements.

· Six assumptions are associated with the basic RMA:

○ all of the tasks are periodic,

○ the tasks are independent of each other and no interactions occur among them,

○ a task's deadline is the beginning of its next period,

○ each task has a constant execution time that does not vary over time,

○ all of the tasks have the same level of criticality, and

○ aperiodic tasks are limited to initialization and failure recovery work, and these aperiodic tasks do not have hard deadlines.

· Basic RMA does not account for task synchronization or aperiodic tasks.
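The rate monotonic rule from the list above (the shorter the period, the higher the priority) amounts to sorting tasks by period. The following is a minimal sketch; the function name, the task names, and the convention that 0 is the highest priority are assumptions made for illustration, and a real RTOS may number priorities differently.

```python
def assign_rms_priorities(periods_ms):
    """Map each task name to a priority, where 0 is the highest priority.

    Tasks are ranked by period: the shortest period gets priority 0.
    """
    ordered = sorted(periods_ms, key=periods_ms.get)
    return {name: priority for priority, name in enumerate(ordered)}


# Periods in milliseconds, deliberately listed out of order.
PRIORITIES = assign_rms_priorities({"task3": 300, "task1": 100, "task2": 150})
print(PRIORITIES)
```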

Chapter 15: Synchronization And Communication

Software applications for real-time embedded systems use concurrency to maximize efficiency. As a result, an application's design typically involves multiple concurrent threads, tasks, or processes. Coordinating these activities requires inter-task synchronization and communication.

This chapter focuses on:

· resource synchronization,

· activity synchronization,

· inter-task communication, and

· ready-to-use embedded design patterns.

Synchronization is classified into two categories: resource synchronization and activity synchronization. Resource synchronization determines whether access to a shared resource is safe, and, if not, when it will be safe. Activity synchronization determines whether the execution of a multithreaded program has reached a certain state and, if it hasn't, how to wait for and be notified when this state is reached.
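As a rough illustration of activity synchronization, the sketch below lets one thread wait until another announces that a particular state has been reached. It uses Python's standard `threading.Condition`; the `StateFlag` class and the task names are hypothetical and stand in for whatever primitive the RTOS provides.

```python
import threading


class StateFlag:
    """Hypothetical helper: lets tasks wait until a given state is reached."""

    def __init__(self):
        self._cond = threading.Condition()
        self._reached = False

    def signal(self):
        """Record that the state was reached and notify every waiter."""
        with self._cond:
            self._reached = True
            self._cond.notify_all()

    def wait(self, timeout=None):
        """Block until the state is reached; return False on timeout."""
        with self._cond:
            return self._cond.wait_for(lambda: self._reached, timeout)


init_done = StateFlag()
log = []


def worker_task():
    init_done.wait(timeout=5)  # wait for the state instead of polling
    log.append("worker started after init")


worker = threading.Thread(target=worker_task)
worker.start()
log.append("init complete")
init_done.signal()
worker.join()
print(log)
```

The waiter does not need to know which task produces the state, only how to wait for it and be notified.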

15.2.1 Resource Synchronization

Access by multiple tasks must be synchronized to maintain the integrity of a shared resource. This process is called resource synchronization, a term closely associated with critical sections and mutual exclusion.

Mutual exclusion is a provision by which only one task at a time can access a shared resource. A critical section is the section of code from which the shared resource is accessed.

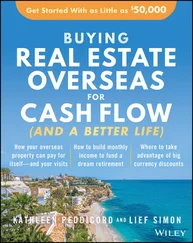

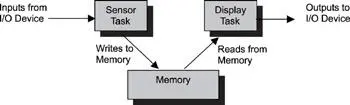

As an example, consider two tasks trying to access shared memory. One task (the sensor task) periodically receives data from a sensor and writes the data to shared memory. Meanwhile, a second task (the display task) periodically reads from shared memory and sends the data to a display. The common design pattern of using shared memory is illustrated in Figure 15.1.

Figure 15.1: Multiple tasks accessing shared memory.

Problems arise if access to the shared memory is not exclusive and multiple tasks can access it simultaneously. For example, if the sensor task has not completed writing data to the shared memory area before the display task tries to display the data, the display would contain a mixture of data extracted at different times, leading to erroneous data interpretation.

The section of code in the sensor task that writes input data to the shared memory is a critical section of the sensor task. The section of code in the display task that reads data from the shared memory is a critical section of the display task. These two critical sections are called competing critical sections because they access the same shared resource.

A mutual exclusion algorithm ensures that one task's execution of a critical section is not interrupted by the competing critical sections of other concurrently executing tasks.
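The sensor and display scenario can be sketched with a lock guarding both competing critical sections. This is an illustrative sketch only: it uses Python's `threading.Lock` in place of an RTOS mutex, and the `SharedSample` class, its two fields, and the loop counts are assumptions made for the example.

```python
import threading


class SharedSample:
    """Hypothetical shared-memory record with two fields that must stay consistent."""

    def __init__(self):
        self._lock = threading.Lock()
        self._first = 0
        self._second = 0

    def write(self, value):
        with self._lock:  # critical section of the sensor task
            self._first = value
            self._second = value

    def read(self):
        with self._lock:  # competing critical section of the display task
            return self._first, self._second


shared = SharedSample()
torn_reads = []


def sensor_task():
    for value in range(1, 2001):
        shared.write(value)


def display_task():
    for _ in range(2000):
        first, second = shared.read()
        if first != second:  # would indicate a mix of old and new data
            torn_reads.append((first, second))


tasks = [threading.Thread(target=sensor_task), threading.Thread(target=display_task)]
for task in tasks:
    task.start()
for task in tasks:
    task.join()
print(len(torn_reads))
```

Because both the write and the read take the same lock, the display task can never observe a record that is only half updated.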

One way to synchronize access to shared resources is to use a client-server model, in which a central entity called a resource server is responsible for synchronization. Access requests are made to the resource server, which must grant permission to the requestor before the requestor can access the shared resource. The resource server determines the eligibility of the requestor based on pre-assigned rules or run-time heuristics.
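A resource server of this kind can be sketched as a thread that receives requests on a queue, applies an eligibility rule, and grants access to one requestor at a time. Everything named here (`ResourceServer`, the `allowed` set used as the pre-assigned rule, the task names) is hypothetical, and a production design would also need timeouts and handling for clients that never release.

```python
import queue
import threading


class ResourceServer:
    """Hypothetical resource server that grants access to one requestor at a time."""

    def __init__(self, allowed):
        self._allowed = set(allowed)  # pre-assigned eligibility rule
        self._requests = queue.Queue()
        self._thread = threading.Thread(target=self._serve, daemon=True)
        self._thread.start()

    def _serve(self):
        while True:
            item = self._requests.get()
            if item is None:  # shutdown sentinel
                return
            name, reply, released = item
            granted = name in self._allowed
            reply.put(granted)  # tell the requestor the decision
            if granted:
                released.wait()  # no further grants until this one is released

    def request(self, name):
        """Block until the server decides; return a release callback, or None if denied."""
        reply = queue.Queue(maxsize=1)
        released = threading.Event()
        self._requests.put((name, reply, released))
        return released.set if reply.get() else None

    def stop(self):
        self._requests.put(None)
        self._thread.join()


server = ResourceServer(allowed={"sensor", "display"})
shared_memory = []


def client(name):
    release = server.request(name)
    if release is None:
        return  # permission denied by the server
    try:
        shared_memory.append(name)  # exclusive access while the grant is held
    finally:
        release()


clients = [threading.Thread(target=client, args=(n,)) for n in ("sensor", "display", "logger")]
for c in clients:
    c.start()
for c in clients:
    c.join()
server.stop()
print(sorted(shared_memory))
```

Centralizing the decision in one place keeps the access policy out of the client tasks, at the cost of a round trip to the server for every access.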
