Lastly, another opportunity for further reducing energy consumption lies in redesigning sensor hardware to cut the energy spent on sensing itself. When collecting data from onboard sensors, a large portion of the energy is consumed by the analog-to-digital converter (ADC). Early works have explored the feasibility of removing the ADC and using analog sensor signals directly as inputs for DNN models [20]. Their promising results demonstrate the significant potential of this research direction.

3.2.4 Heterogeneity in Sensor Data

Many edge devices are equipped with more than one onboard sensor. For example, a smartphone has a global positioning system (GPS) sensor to track geographical locations, an accelerometer to capture physical movements, a light sensor to measure ambient light levels, a touchscreen sensor to monitor users' interactions with their phones, a microphone to collect audio information, and a camera to capture images and videos. Data obtained by these sensors are heterogeneous by nature: they differ in format, dimensions, sampling rates, and scales. Accounting for this heterogeneity when building DNN models, and effectively integrating heterogeneous sensor data as inputs for those models, represents a significant challenge.

To address this challenge, one opportunity lies in building a multimodal deep learning model that takes data from different sensing modalities as its inputs. For example, [21] proposed a multimodal DNN model that uses restricted Boltzmann machines (RBMs) for activity recognition. Similarly, [22] proposed a multimodal DNN model for smartwatch-based activity recognition. Besides building multimodal DNN models, another opportunity lies in combining information extracted from heterogeneous sensor data at different dimensions and scales. As an example, [23] proposed a multiresolution deep embedding approach for processing heterogeneous data at different dimensions, and [24] proposed an integrated convolutional and recurrent neural network (RNN) architecture for processing heterogeneous data at different scales.
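To make the heterogeneity concrete, the following is a minimal sketch of one simple way to align sensor streams with different sampling rates before feeding them to a single model. It is not the method of [21]-[24]: the function names, the one-second window, and the choice of mean and standard deviation as per-window features are all assumptions made for illustration, and scale normalization across modalities is left out.

```python
from statistics import mean, pstdev


def window_features(samples, rate_hz, window_s):
    """Summarize one modality as a (mean, std) pair per fixed-length time window."""
    per_window = int(rate_hz * window_s)
    if per_window < 1:
        raise ValueError("window is shorter than one sample period")
    feats = []
    # Step through the stream in non-overlapping windows, dropping any partial tail.
    for start in range(0, len(samples) - per_window + 1, per_window):
        chunk = samples[start:start + per_window]
        feats.append((mean(chunk), pstdev(chunk)))
    return feats


def fuse(streams, window_s=1.0):
    """Fuse modalities window by window.

    streams maps a (hypothetical) modality name to (samples, rate_hz).
    Returns one concatenated feature vector per time window.
    """
    per_modality = {
        name: window_features(samples, rate, window_s)
        for name, (samples, rate) in streams.items()
    }
    # Only keep windows that every modality covers.
    n_windows = min(len(f) for f in per_modality.values())
    fused = []
    for w in range(n_windows):
        vec = []
        for name in sorted(per_modality):  # fixed order keeps the layout stable
            vec.extend(per_modality[name][w])
        fused.append(vec)
    return fused


# Two seconds of data: a 50 Hz accelerometer axis and a 2 Hz light sensor.
streams = {
    "accel": ([0.0, 1.0] * 50, 50),
    "light": ([300.0] * 4, 2),
}
fused = fuse(streams)
```

Because each modality is reduced to the same number of windows, streams sampled at 50 Hz and 2 Hz end up with one aligned feature vector per second, which a downstream model could consume regardless of the original rates.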

3.2.5 Heterogeneity in Computing Units

Besides data heterogeneity, edge devices are also confronted with heterogeneity in on-device computing units. As computing hardware becomes more and more specialized, an edge device could have a diverse set of onboard computing units including traditional processors such as central processing units (CPUs), digital signal processing (DSP) units, graphics processing units (GPUs), and field-programmable gate arrays (FPGAs), as well as emerging domain-specific processors such as Google's Tensor Processing Units (TPUs). Given the increasing heterogeneity in onboard computing units, mapping deep learning tasks and DNN models to the diverse set of onboard computing units is challenging.

To address this challenge, the opportunity lies in mapping the operations involved in DNN model execution to the computing units that are optimized for them. State-of-the-art DNN models incorporate a diverse set of operations, but these can generally be grouped into two categories: parallel operations and sequential operations. For example, the convolution operations involved in convolutional neural networks (CNNs) are matrix multiplications that can be executed efficiently in parallel on GPUs, whose architecture is optimized for parallel operations. In contrast, the operations involved in RNNs have strong sequential dependencies and are a better fit for CPUs, which are optimized for executing sequential operations where operator dependencies exist. This diversity of operations suggests the importance of building an architecture-aware compiler that is able to decompose a DNN model at the operation level and then assign each operation to the type of computing unit whose architectural characteristics fit it. Such an architecture-aware compiler would maximize hardware resource utilization and significantly improve DNN model execution efficiency.
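The operation-level mapping described above can be sketched as a simple placement pass. This is only an illustration under stated assumptions, not an actual compiler: the operation names, the `Op` type, the unit labels "gpu" and "cpu", and the rule of falling back to the CPU are all hypothetical.

```python
from dataclasses import dataclass

# Hypothetical operation kinds, following the parallel/sequential split in the text.
PARALLEL_KINDS = {"conv2d", "matmul"}
SEQUENTIAL_KINDS = {"lstm_cell", "gru_cell"}


@dataclass(frozen=True)
class Op:
    name: str  # unique name of the operation inside the model graph
    kind: str  # operation type, e.g. "conv2d" or "lstm_cell"


def assign_units(ops, available_units):
    """Place each operation on the unit assumed to suit it best.

    Parallel operations go to the GPU when one is present; sequential
    operations, and anything unrecognized, go to the CPU.
    """
    placement = {}
    for op in ops:
        if op.kind in PARALLEL_KINDS and "gpu" in available_units:
            placement[op.name] = "gpu"
        else:
            placement[op.name] = "cpu"
    return placement


model_ops = [
    Op("conv1", "conv2d"),
    Op("conv2", "conv2d"),
    Op("rnn1", "lstm_cell"),
    Op("classifier", "matmul"),
]
placement = assign_units(model_ops, available_units={"cpu", "gpu"})
cpu_only = assign_units(model_ops, available_units={"cpu"})
```

A real architecture-aware compiler would also have to weigh data transfer costs between units and the current load on each unit; the sketch only captures the basic idea of matching operation type to hardware.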

3.2.6 Multitenancy of Deep Learning Tasks

The complexity of real-world applications requires edge devices to concurrently execute multiple DNN models that target different deep learning tasks [25]. For example, a service robot that interacts with customers needs not only to track the faces of the individuals it interacts with, but also to recognize their facial emotions at the same time. These tasks all share the same data inputs and the limited resources on the edge device. Effectively sharing the data inputs across concurrent deep learning tasks, and efficiently utilizing the shared resources to maximize the overall performance of all of those tasks, is challenging.

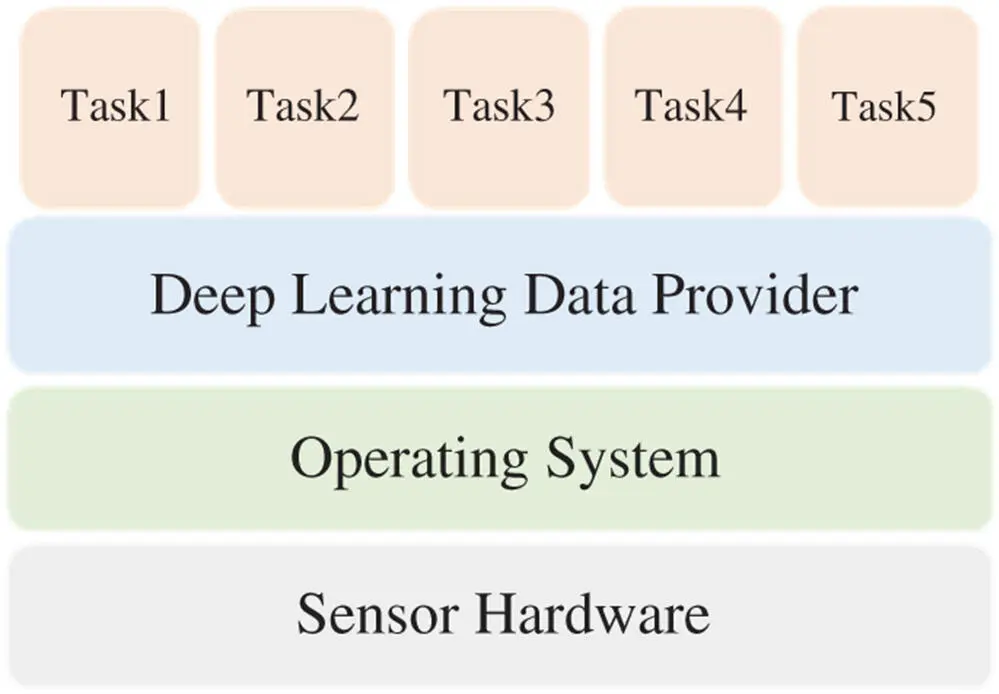

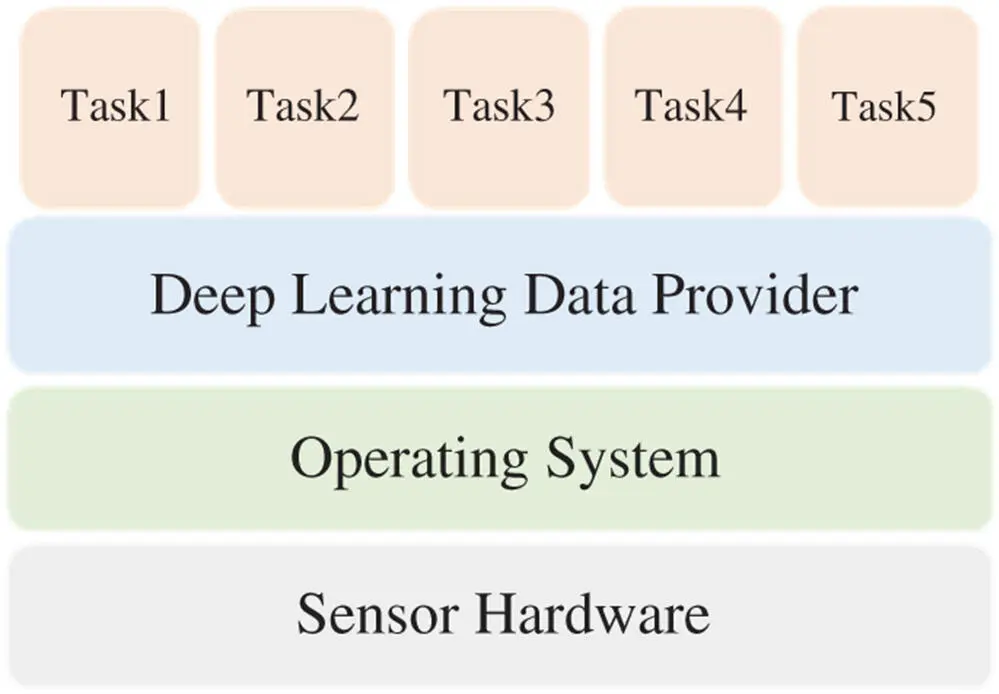

In terms of input data sharing, data acquisition for concurrently running deep learning tasks on edge devices is currently exclusive. In other words, at runtime, only a single deep learning task is able to access the sensor data inputs at any one time. As a consequence, when multiple deep learning tasks run concurrently on an edge device, each task has to explicitly invoke system application programming interfaces (APIs) to obtain its own copy of the data and maintain it in its own process space. This mechanism causes considerable system overhead as the number of concurrently running deep learning tasks increases. To address this input data sharing challenge, one opportunity lies in creating a data provider that is transparent to deep learning tasks and sits between them and the operating system, as shown in Figure 3.2. The data provider creates a single copy of the sensor data inputs, and all deep learning tasks that need data access this single copy. As such, a deep learning task is able to acquire data without interfering with other tasks. More importantly, this provides a solution that scales with the number of concurrently running deep learning tasks.
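As a rough illustration of the data provider idea, the sketch below keeps one read-only copy of the latest sensor frame and hands the same view to every task. It is a single-process simplification under stated assumptions: the class name `SensorDataProvider`, the `read_sensor` callback, and the use of a `memoryview` are hypothetical choices, and a real provider shared across processes would need an operating system mechanism such as shared memory.

```python
import threading


class SensorDataProvider:
    """Holds a single read-only copy of the latest sensor frame for all tasks."""

    def __init__(self, read_sensor):
        self._read_sensor = read_sensor  # hypothetical callback returning raw bytes
        self._lock = threading.Lock()
        self._frame = None
        self._frame_id = -1
        self.sensor_reads = 0  # how many times the sensor was actually read

    def refresh(self):
        """Read the sensor once and publish the result to every task."""
        data = bytes(self._read_sensor())
        with self._lock:
            self._frame = memoryview(data)  # a memoryview over bytes is read-only
            self._frame_id += 1
            self.sensor_reads += 1

    def latest(self):
        """Return (frame_id, view); every caller gets a view of the same buffer."""
        with self._lock:
            return self._frame_id, self._frame


provider = SensorDataProvider(read_sensor=lambda: b"\x01\x02\x03\x04")
provider.refresh()

# Two concurrent tasks (here simply two calls) read the same underlying copy.
face_id, face_view = provider.latest()
emotion_id, emotion_view = provider.latest()
```

Since tasks only receive a read-only view, none of them can modify the frame seen by the others, and adding more tasks does not add more sensor reads or more copies.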

In terms of resource sharing, in common practice, DNN models are designed for individual deep learning tasks. However, existing works in deep learning show that DNN models exhibit layer-wise semantics, where bottom layers extract basic structures and low-level features while upper layers extract complex structures and high-level features. This key finding aligns with a subfield of machine learning named multitask learning [26]. In multitask learning, a single model is trained to perform multiple tasks by sharing low-level features, while the high-level features differ from task to task. For example, a DNN model can be trained for scene understanding as well as object classification [27]. Multitask learning provides an opportunity to improve resource utilization on resource-limited edge devices that concurrently execute multiple deep learning tasks. By sharing the low-level layers of the DNN model across different deep learning tasks, the redundancy across those tasks can be substantially reduced. In doing so, edge devices can efficiently utilize the shared resources to maximize the overall performance of all the concurrent deep learning tasks.
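The layer-sharing idea can be sketched with plain Python functions standing in for network layers. Everything here is a toy assumption: the class name `SharedTrunkModel`, the stand-in trunk and heads, and the task names do not come from [26] or [27]; the point is only that the shared low-level computation runs once per input regardless of the number of tasks.

```python
class SharedTrunkModel:
    """Runs shared low-level layers once, then one small head per task."""

    def __init__(self, trunk, heads):
        self.trunk = trunk  # shared low-level feature extractor
        self.heads = heads  # task name -> task-specific high-level layers
        self.trunk_calls = 0

    def run_all(self, x):
        features = self.trunk(x)  # computed once and reused by every task
        self.trunk_calls += 1
        return {task: head(features) for task, head in self.heads.items()}


def toy_trunk(pixels):
    """Toy stand-in for shared low-level layers: rescale pixel values to [0, 1]."""
    return [p / 255.0 for p in pixels]


# Toy stand-ins for task-specific upper layers.
heads = {
    "scene": lambda f: "bright" if sum(f) / len(f) > 0.5 else "dark",
    "object": lambda f: max(range(len(f)), key=lambda i: f[i]),
}

model = SharedTrunkModel(toy_trunk, heads)
outputs = model.run_all([0, 255, 255, 255])
```

Running the two tasks as separate models would evaluate the low-level layers twice on the same input; with a shared trunk, each additional task only adds the cost of its own head.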

Figure 3.2 Illustration of data sharing mechanism.

3.2.7 Offloading to Nearby Edges

Edge devices that have extremely limited resources, such as low-end Internet of Things (IoT) devices, may still be unable to execute even the most memory- and computation-efficient DNN models locally. In such a scenario, instead of running the DNN models locally, it is necessary to offload their execution. As mentioned in the introduction section, offloading to the cloud has a number of drawbacks, including leaking user privacy and suffering from unpredictable end-to-end network latency that could affect user experience, especially when real-time feedback is needed. Considering those drawbacks, a better option is to offload to nearby edge devices that have ample resources to execute the DNN models.
