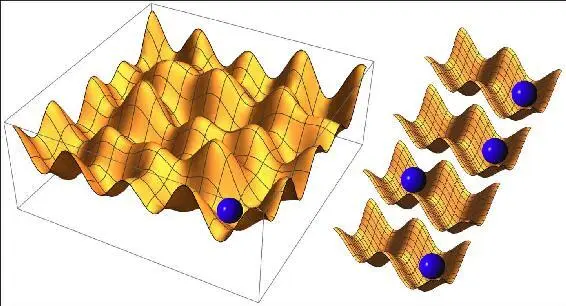

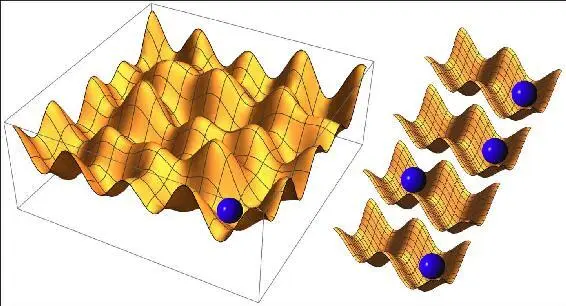

Figure 2.3: A physical object is a useful memory device if it can be in many different stable states. The ball on the left can encode four bits of information labeling which one of 2⁴ = 16 valleys it’s in. Together, the four balls on the right also encode four bits of information, one bit each.
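The counting in the caption can be checked with a short sketch. The function name `bits_for_states` is only an illustrative choice, and the sketch assumes every valley is an equally usable stable state.

```python
import math


def bits_for_states(num_states: int) -> float:
    """Bits needed to label one of num_states distinguishable stable states."""
    return math.log2(num_states)


# One ball that can rest in any of 16 valleys.
one_ball_bits = bits_for_states(16)

# Four balls, each resting in one of 2 valleys; independent devices add their bits.
four_balls_bits = 4 * bits_for_states(2)

print(one_ball_bits, four_balls_bits)
```

Both arrangements distinguish the same number of overall configurations (16), which is why they hold the same amount of information.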

Since two-state systems are easy to manufacture and work with, most modern computers store their information as bits, but these bits are embodied in a wide variety of ways. On a DVD, each bit corresponds to whether there is or isn’t a microscopic pit at a given point on the plastic surface. On a hard drive, each bit corresponds to a point on the surface being magnetized in one of two ways. In my laptop’s working memory, each bit corresponds to the positions of certain electrons, determining whether a device called a micro-capacitor is charged. Some kinds of bits are convenient to transport as well, even at the speed of light: for example, in an optical fiber transmitting your email, each bit corresponds to a laser beam being strong or weak at a given time.

Engineers prefer to encode bits into systems that aren’t only stable and easy to read from (as a gold ring), but also easy to write to: altering the state of your hard drive requires much less energy than engraving gold. They also prefer systems that are convenient to work with and cheap to mass-produce. But other than that, they simply don’t care about how the bits are represented as physical objects—and nor do you most of the time, because it simply doesn’t matter! If you email your friend a document to print, the information may get copied in rapid succession from magnetizations on your hard drive to electric charges in your computer’s working memory, radio waves in your wireless network, voltages in your router, laser pulses in an optical fiber and, finally, molecules on a piece of paper. In other words, information can take on a life of its own, independent of its physical substrate! Indeed, it’s usually only this substrate-independent aspect of information that we’re interested in: if your friend calls you up to discuss that document you sent, she’s probably not calling to talk about voltages or molecules. This is our first hint of how something as intangible as intelligence can be embodied in tangible physical stuff, and we’ll soon see how this idea of substrate independence is much deeper, including not only information but also computation and learning.

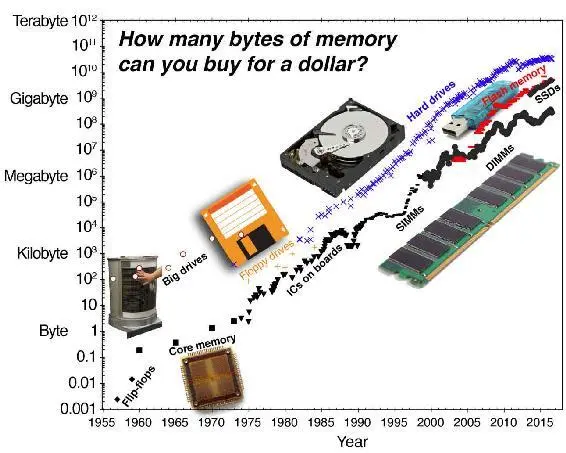

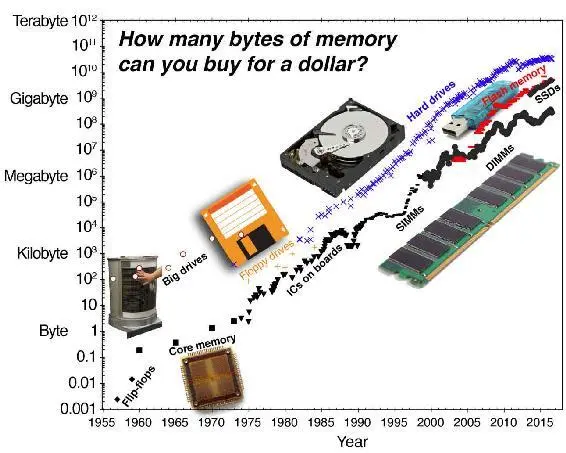

Because of this substrate independence, clever engineers have been able to repeatedly replace the memory devices inside our computers with dramatically better ones, based on new technologies, without requiring any changes whatsoever to our software. The result has been spectacular, as illustrated in figure 2.4: over the past six decades, computer memory has gotten half as expensive roughly every couple of years. Hard drives have gotten over 100 million times cheaper, and the faster memories useful for computation rather than mere storage have become a whopping 10 trillion times cheaper. If you could get such a “99.99999999999% off” discount on all your shopping, you could buy all real estate in New York City for about 10 cents and all the gold that’s ever been mined for around a dollar.

For many of us, the spectacular improvements in memory technology come with personal stories. I fondly remember working in a candy store back in high school to pay for a computer sporting 16 kilobytes of memory, and when I made and sold a word processor for it with my high school classmate Magnus Bodin, we were forced to write it all in ultra-compact machine code to leave enough memory for the words that it was supposed to process. After getting used to floppy drives storing 70kB, I became awestruck by the smaller 3.5-inch floppies that could store a whopping 1.44MB and hold a whole book, and then my first-ever hard drive storing 10MB—which might just barely fit a single one of today’s song downloads. These memories from my adolescence felt almost unreal the other day, when I spent about $100 on a hard drive with 300,000 times more capacity.

Figure 2.4: Over the past six decades, computer memory has gotten twice as cheap roughly every couple of years, corresponding to a thousand times cheaper roughly every twenty years. A byte equals eight bits. Data courtesy of John McCallum, from http://www.jcmit.net/memoryprice.htm.
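The step from "twice as cheap every couple of years" to "a thousand times cheaper every twenty years" is compound halving. Here is a minimal sketch of that arithmetic, assuming a halving period of exactly two years (the caption only says "roughly"); the function name `cheapening_factor` is hypothetical.

```python
def cheapening_factor(years: float, halving_period_years: float = 2.0) -> float:
    """How many times cheaper memory becomes after `years`,
    assuming the price halves once every `halving_period_years`."""
    return 2.0 ** (years / halving_period_years)


per_twenty_years = cheapening_factor(20)  # ten halvings: 2**10
per_sixty_years = cheapening_factor(60)   # thirty halvings: 2**30

print(per_twenty_years)
print(per_sixty_years)
```

Ten halvings give a factor of 1024, which is the "thousand times" in the caption. Under the same idealized assumption, six decades would give a factor of about a billion, so the two-year halving time is best read as a rough average across different kinds of memory.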

What about memory devices that evolved rather than being designed by humans? Biologists don’t yet know what the first-ever life form was that copied its blueprints between generations, but it may have been quite small. A team led by Philipp Holliger at Cambridge University made an RNA molecule in 2016 that encoded 412 bits of genetic information and was able to copy RNA strands longer than itself, bolstering the “RNA world” hypothesis that early Earth life involved short self-replicating RNA snippets. So far, the smallest memory device known to be evolved and used in the wild is the genome of the bacterium Candidatus Carsonella ruddii, storing about 40 kilobytes, whereas our human DNA stores about 1.6 gigabytes, comparable to a downloaded movie. As mentioned in the last chapter, our brains store much more information than our genes: in the ballpark of 10 gigabytes electrically (specifying which of your 100 billion neurons are firing at any one time) and 100 terabytes chemically/biologically (specifying how strongly different neurons are linked by synapses). Comparing these numbers with the machine memories shows that the world’s best computers can now out-remember any biological system—at a cost that’s rapidly dropping and was a few thousand dollars in 2016.

The memory in your brain works very differently from computer memory, not only in terms of how it’s built, but also in terms of how it’s used. Whereas you retrieve memories from a computer or hard drive by specifying where they’re stored, you retrieve memories from your brain by specifying something about what is stored. Each group of bits in your computer’s memory has a numerical address, and to retrieve a piece of information, the computer specifies at what address to look, just as if I tell you “Go to my bookshelf, take the fifth book from the right on the top shelf, and tell me what it says on page 314.” In contrast, you retrieve information from your brain similarly to how you retrieve it from a search engine: you specify a piece of the information or something related to it, and it pops up. If I tell you “to be or not,” or if I google it, chances are that it will trigger “To be, or not to be, that is the question.” Indeed, it will probably work even if I use another part of the quote or mess things up somewhat. Such memory systems are called auto-associative, since they recall by association rather than by address.
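The contrast between the two kinds of retrieval can be sketched in a few lines. This is only a toy illustration: the stored quotes beyond the Hamlet line, the function names, and the word-overlap matching rule are all assumptions made for the example, not a model of how brains or search engines actually work.

```python
import re

# A tiny "memory" whose entries sit at numbered positions (addresses).
memory = [
    "To be, or not to be, that is the question.",
    "All the world's a stage.",
    "Brevity is the soul of wit.",
]


def recall_by_address(address: int) -> str:
    """Computer-style retrieval: say where to look."""
    return memory[address]


def words(text: str) -> set:
    """Lowercased set of words in a piece of text."""
    return set(re.findall(r"[a-z']+", text.lower()))


def recall_by_association(cue: str) -> str:
    """Brain-style retrieval: give a fragment, get back the best-matching memory.
    The match score is simply the number of cue words the entry shares."""
    cue_words = words(cue)
    return max(memory, key=lambda item: len(cue_words & words(item)))


print(recall_by_address(0))
print(recall_by_association("to be or not"))
print(recall_by_association("that is the question"))
```

The second and third calls never mention a position, yet each returns the full quote, and a slightly garbled cue such as "not to bee or" still lands on the same entry because most of its words match.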

In a famous 1982 paper, the physicist John Hopfield showed how a network of interconnected neurons could function as an auto-associative memory. I find the basic idea very beautiful, and it works for any physical system with multiple stable states. For example, consider a ball on a surface with two valleys, like the one-bit system in figure 2.3, and let’s shape the surface so that the x-coordinates of the two minima where the ball can come to rest are x = √2 ≈ 1.41421 and x = π ≈ 3.14159, respectively. If you remember only that π is close to 3, you simply put the ball at x = 3 and watch it reveal a more exact π-value as it rolls down to the nearest minimum. Hopfield realized that a complex network of neurons provides an analogous landscape with very many energy minima that the system can settle into, and it was later proved that you can squeeze in as many as 138 different memories for every thousand neurons without causing major confusion.
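To make the idea concrete, here is a minimal sketch of a Hopfield-style network in plain Python. It follows the common textbook presentation (neurons with states +1 or -1, a Hebbian-style rule for the connection strengths, one-at-a-time updates) and is not claimed to reproduce the 1982 paper exactly. The two stored patterns, the network size of eight neurons, and the function names are assumptions chosen for illustration.

```python
def train(patterns):
    """Hebbian-style rule: strengthen the link between two neurons whenever
    they agree in a stored pattern, weaken it when they disagree.
    No neuron is connected to itself."""
    n = len(patterns[0])
    weights = [[0] * n for _ in range(n)]
    for p in patterns:
        for i in range(n):
            for j in range(n):
                if i != j:
                    weights[i][j] += p[i] * p[j]
    return weights


def recall(weights, cue, max_sweeps=10):
    """Start from a noisy cue and update one neuron at a time until nothing
    changes, like the ball rolling downhill until it rests in a valley."""
    state = list(cue)
    n = len(state)
    for _ in range(max_sweeps):
        changed = False
        for i in range(n):
            field = sum(weights[i][j] * state[j] for j in range(n))
            new_value = 1 if field >= 0 else -1
            if new_value != state[i]:
                state[i] = new_value
                changed = True
        if not changed:
            break
    return state


# Two stored memories (hypothetical patterns chosen to be very different).
memory_a = [1, 1, 1, 1, -1, -1, -1, -1]
memory_b = [1, -1, 1, -1, 1, -1, 1, -1]
weights = train([memory_a, memory_b])

# A corrupted version of memory_a: the first neuron is flipped.
noisy_a = [-1, 1, 1, 1, -1, -1, -1, -1]
restored = recall(weights, noisy_a)
print(restored == memory_a)
```

The corrupted cue plays the role of "π is close to 3": it is near one stored memory, and the update rule pulls the network the rest of the way. With only eight neurons this toy can hold very few patterns before they start to interfere with one another.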
