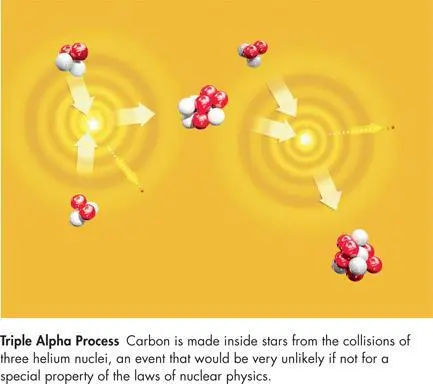

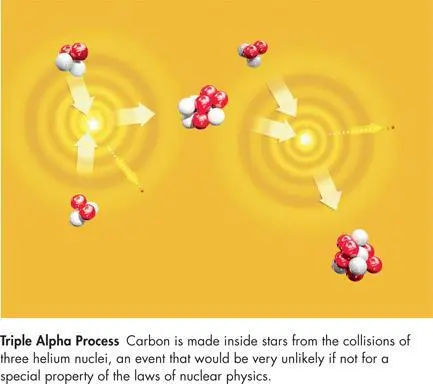

The situation changes when a star starts to run out of hydrogen. When that happens, the star’s core collapses until its central temperature rises to about 100 million kelvin. Under those conditions, nuclei encounter each other so often that some beryllium nuclei collide with a helium nucleus before they have had a chance to decay. Beryllium can then fuse with helium to form an isotope of carbon that is stable. But that carbon is still a long way from forming ordered aggregates of chemical compounds of the type that can enjoy a glass of Bordeaux, juggle flaming bowling pins, or ask questions about the universe. For beings such as humans to exist, the carbon must be moved from inside the star to friendlier neighborhoods. That, as we’ve said, is accomplished when the star, at the end of its life cycle, explodes as a supernova, expelling carbon and other heavy elements that later condense into a planet.

This process of carbon creation is called the triple alpha process because “alpha particle” is another name for the nucleus of the isotope of helium involved, and because the process requires that three of them (eventually) fuse together. The usual physics predicts that the rate of carbon production via the triple alpha process ought to be quite small. Noting this, in 1952 Hoyle predicted that the sum of the energies of a beryllium nucleus and a helium nucleus must be almost exactly the energy of a certain quantum state of the isotope of carbon formed, a situation called a resonance, which greatly increases the rate of a nuclear reaction. At the time, no such energy level was known, but based on Hoyle’s suggestion, William Fowler at Caltech sought and found it, providing important support for Hoyle’s views on how complex nuclei were created.
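Why does a near-exact energy match matter so much? The text does not give a formula, but a common textbook way to picture a resonance is the single-level Breit-Wigner (Lorentzian) line shape, in which the reaction probability peaks when the incoming energy equals the energy of the excited state and falls off rapidly as the two drift apart. The sketch below is schematic only: it ignores thermal averaging over the star's particle energies and all other nuclear details, and the function name, resonance energy, and width are hypothetical values in arbitrary units, not measured properties of the carbon state.

```python
# Schematic sketch of a resonance, ASSUMING a single-level Breit-Wigner
# (Lorentzian) line shape. All energies are in arbitrary, hypothetical units.

def resonance_factor(energy: float, resonance_energy: float, width: float) -> float:
    """Relative reaction probability: exactly 1 on resonance, smaller elsewhere."""
    half_width = width / 2
    return half_width**2 / ((energy - resonance_energy) ** 2 + half_width**2)


E_R = 1.0     # hypothetical energy of the excited carbon state
WIDTH = 0.01  # hypothetical width of that state

# Compare the reaction probability as the combined beryllium + helium energy
# moves away from the resonance energy.
for detuning in (0.0, 0.005, 0.05, 0.5):
    factor = resonance_factor(E_R + detuning, E_R, WIDTH)
    print(f"off by {detuning}: relative probability {factor:.2e}")
```

Moving away from the resonance by half its width already halves the probability, and moving away by fifty widths cuts it by a factor of roughly ten thousand, which is the sense in which a "nearly exact" match greatly increases the rate.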

Hoyle wrote, “I do not believe that any scientist who examined the evidence would fail to draw the inference that the laws of nuclear physics have been deliberately designed with regard to the consequences they produce inside the stars.” At the time no one knew enough nuclear physics to understand the magnitude of the serendipity that resulted in these exact physical laws. But in recent years, in investigating the validity of the strong anthropic principle, physicists have begun asking themselves what the universe would have been like if the laws of nature were different. Today we can create computer models that tell us how the rate of the triple alpha reaction depends upon the strength of the fundamental forces of nature. Such calculations show that a change of as little as 0.5 percent in the strength of the strong nuclear force, or 4 percent in the electric force, would destroy either nearly all carbon or all oxygen in every star, and hence the possibility of life as we know it. Change those rules of our universe just a bit, and the conditions for our existence disappear!

By examining the model universes we generate when the theories of physics are altered in certain ways, one can study the effect of changes to physical law in a methodical manner. It turns out that it is not only the strengths of the strong nuclear force and the electromagnetic force that are made to order for our existence. Most of the fundamental constants in our theories appear fine-tuned in the sense that if they were altered by only modest amounts, the universe would be qualitatively different, and in many cases unsuitable for the development of life. For example, if the other nuclear force, the weak force, were much weaker, in the early universe all the hydrogen in the cosmos would have turned to helium, and hence there would be no normal stars; if it were much stronger, exploding supernovas would not eject their outer envelopes, and hence would fail to seed interstellar space with the heavy elements planets require to foster life. If protons were 0.2 percent heavier, they would decay into neutrons, destabilizing atoms. If the sum of the masses of the types of quark that make up a proton were changed by as little as 10 percent, there would be far fewer of the stable atomic nuclei of which we are made; in fact, the summed quark masses seem roughly optimized for the existence of the largest number of stable nuclei.

If one assumes that a few hundred million years in stable orbit are necessary for planetary life to evolve, the number of space dimensions is also fixed by our existence. That is because, according to the laws of gravity, it is only in three dimensions that stable elliptical orbits are possible. Circular orbits are possible in other dimensions, but those, as Newton feared, are unstable. In any but three dimensions even a small disturbance, such as that produced by the pull of the other planets, would send a planet off its circular orbit and cause it to spiral either into or away from the sun, so we would either burn up or freeze. Also, in more than three dimensions the gravitational force between two bodies would decrease more rapidly than it does in three dimensions. In three dimensions the gravitational force drops to ¼ of its value if one doubles the distance. In four dimensions it would drop to ⅛, in five dimensions it would drop to 1/16, and so on. As a result, in more than three dimensions the sun would not be able to exist in a stable state with its internal pressure balancing the pull of gravity. It would either fall apart or collapse to form a black hole, either of which could ruin your day. On the atomic scale, the electrical forces would behave in the same way as gravitational forces. That means the electrons in atoms would either escape or spiral into the nucleus. In neither case would atoms as we know them be possible.
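The ¼, ⅛, 1/16 pattern follows if gravity in d space dimensions falls off as 1/r^(d−1), the natural generalization of the inverse-square law. The sketch below is a simplified Newtonian model, not the authors' own calculation, and its function names are hypothetical. It computes how much the force weakens when the distance doubles, and it applies the standard effective-potential test to a circular orbit: if the net radial force (gravity minus the centrifugal term) pushes a slightly displaced planet back toward its original radius, the orbit is stable; if not, the planet drifts away. Only d ≥ 3 is considered.

```python
from fractions import Fraction

# Simplified Newtonian sketch, ASSUMING gravity in d space dimensions
# has the form F = k / r**(d - 1). Function names are hypothetical.


def force_ratio_on_doubling(d: int) -> Fraction:
    """Factor by which F ∝ 1/r**(d-1) changes when the distance doubles."""
    return Fraction(1, 2 ** (d - 1))


def radial_slope(d: int, k: float = 1.0, r0: float = 1.0) -> float:
    """Derivative of the net radial force (per unit mass) at a circular orbit.

    Radial equation: r'' = L2 / r**3 - k / r**n, with n = d - 1.
    A circular orbit at r0 requires L2 = k * r0**(3 - n).
    A negative slope means a displaced planet is pushed back (stable);
    zero or positive means it is not pushed back (not stable).
    """
    n = d - 1
    L2 = k * r0 ** (3 - n)  # angular momentum squared for the circular orbit
    return -3 * L2 / r0**4 + n * k * r0 ** (-n - 1)


for d in (3, 4, 5):
    print(
        f"d={d}: force at 2r is {force_ratio_on_doubling(d)} of force at r; "
        f"circular orbit stable: {radial_slope(d) < 0}"
    )
```

Algebraically the slope works out to k·r0^(−d)·(d − 4), so it is negative only for d = 3 among the cases d ≥ 3; at d = 4 it vanishes at this order, and a fuller analysis shows that orbit is unstable too.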

The emergence of the complex structures capable of supporting intelligent observers seems to be very fragile. The laws of nature form a system that is extremely fine-tuned, and very little in physical law can be altered without destroying the possibility of the development of life as we know it. Were it not for a series of startling coincidences in the precise details of physical law, it seems, humans and similar life-forms would never have come into being.

The most impressive fine-tuning coincidence involves the so-called cosmological constant in Einstein’s equations of general relativity. As we’ve said, in 1915, when he formulated the theory, Einstein believed that the universe was static, that is, neither expanding nor contracting. Since all matter attracts other matter, he introduced into his theory a new antigravity force to combat the tendency of the universe to collapse onto itself. This force, unlike other forces, did not come from any particular source but was built into the very fabric of space-time. The cosmological constant describes the strength of that force.

When it was discovered that the universe was not static, Einstein eliminated the cosmological constant from his theory and called including it the greatest blunder of his life. But in 1998 observations of very distant supernovas revealed that the universe is expanding at an accelerating rate, an effect that is not possible without some kind of repulsive force acting throughout space. The cosmological constant was resurrected. Since we now know that its value is not zero, the question remains, why does it have the value that it does? Physicists have created arguments explaining how it might arise due to quantum mechanical effects, but the value they calculate is about 120 orders of magnitude (a 1 followed by 120 zeroes) stronger than the actual value, obtained through the supernova observations. That means that either the reasoning employed in the calculation was wrong or else some other effect exists that miraculously cancels all but an unimaginably tiny fraction of the number calculated. The one thing that is certain is that if the value of the cosmological constant were much larger than it is, our universe would have blown itself apart before galaxies could form and, once again, life as we know it would be impossible.
