Alex Marcham - Understanding Infrastructure Edge Computing

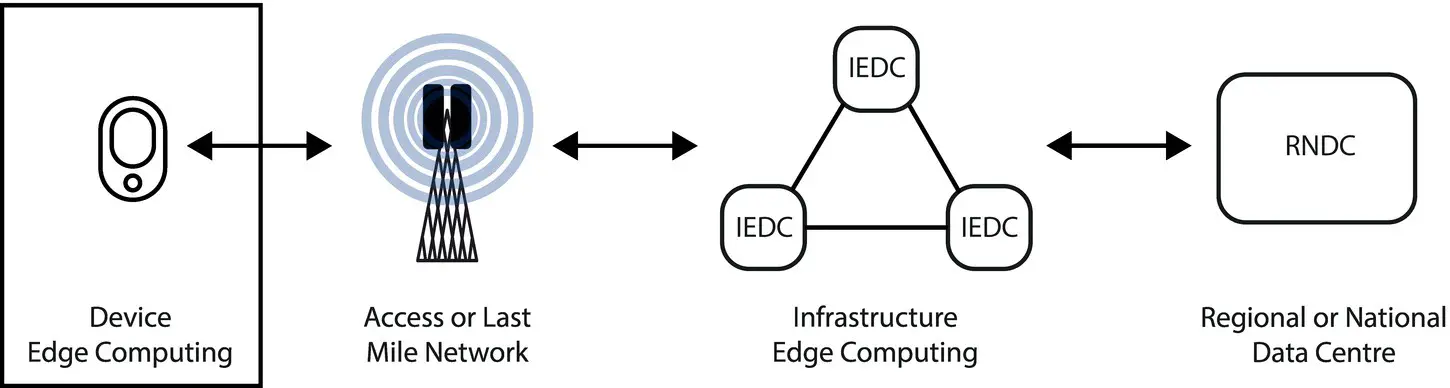

The primary aim of edge computing is to extend compute resources to locations as close as possible to their end users in order to provide enhanced performance and improvements in economics related to large‐scale data transport. The success of cloud computing in reshaping how compute resources are organised, allocated, and consumed over the past decade has driven the use of infrastructure edge computing as the primary method to achieve this goal; the infrastructure edge, unlike the device edge, is where the data centre facilities that support this usage model are located.

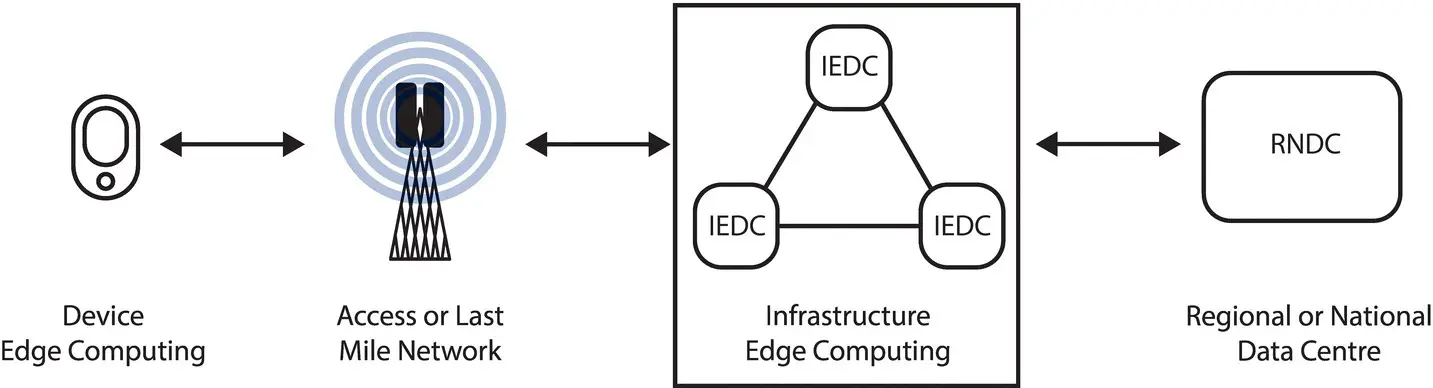

Although it is typically deployed in a small number of large data centres today, the cloud itself is not a physical place. It is a logical entity which is able to utilise compute, storage, and network resources that are distributed across a variety of locations, as long as those locations are capable of supporting the same type of elastic resource allocation as their hyperscale data centre counterparts. A single MMDC represents only a small fraction of the total capacity of a traditional hyperscale facility, but this limited scale can be offset by deploying several MMDC facilities across an area and allocating only a physically local subset of users to each facility (see Figure 2.1).
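The allocation of a physically local subset of users to each facility can be illustrated with a minimal sketch. It assumes that straight-line geographic distance is an acceptable stand-in for locality (a real deployment would more likely use network topology or measured latency), and the facility names, user names, and coordinates are hypothetical.

```python
import math
from collections import defaultdict

def haversine_km(a, b):
    """Great-circle distance in kilometres between two (lat, lon) points given in degrees."""
    lat1, lon1, lat2, lon2 = map(math.radians, (a[0], a[1], b[0], b[1]))
    h = (math.sin((lat2 - lat1) / 2) ** 2
         + math.cos(lat1) * math.cos(lat2) * math.sin((lon2 - lon1) / 2) ** 2)
    return 2 * 6371.0 * math.asin(math.sqrt(h))

def assign_users(users, facilities):
    """Map each facility name to the users for which it is the nearest facility."""
    allocation = defaultdict(list)
    for user, location in users.items():
        nearest = min(facilities, key=lambda name: haversine_km(location, facilities[name]))
        allocation[nearest].append(user)
    return dict(allocation)

# Hypothetical MMDC facilities and users, as (latitude, longitude) pairs.
facilities = {"mmdc-north": (40.80, -73.95), "mmdc-south": (40.65, -73.95)}
users = {"alice": (40.79, -73.96), "bob": (40.66, -73.94), "carol": (40.70, -73.95)}

allocation = assign_users(users, facilities)
for facility, local_users in allocation.items():
    print(facility, local_users)
```

Each facility ends up serving only the users nearest to it, so no single facility needs the capacity of a hyperscale site.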

2.3.3 Device Edge

The device edge refers to the collection of devices which are located on the device side of the last mile network. Common examples include smartphones, tablets, home computers, and game consoles; the device edge also includes autonomous vehicles, industrial robotics systems, and devices that function as smart locks, water sensors, or connected thermostats or that provide other internet of things (IoT) functionality. Whether or not a device is part of the device edge is determined not by the size, cost, or computational capabilities of that device but by which side of the last mile network it operates on. This functional division clarifies the basic architecture of an edge computing system and allows further dimensions such as ownership or device capability to be built on top.
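The classification rule above can be expressed as a small sketch in which membership of the device edge depends only on the side of the last mile network a node sits on. The `Node` type, its fields, and the example values are hypothetical and chosen only to show that cost and capability play no part in the decision.

```python
from dataclasses import dataclass
from enum import Enum

class Side(Enum):
    DEVICE = "device side of the last mile network"
    INFRASTRUCTURE = "infrastructure side of the last mile network"

@dataclass(frozen=True)
class Node:
    name: str
    side: Side
    cost_usd: float   # illustrative only; not used for classification
    cpu_cores: int    # illustrative only; not used for classification

def is_device_edge(node: Node) -> bool:
    """A node belongs to the device edge solely because of where it sits."""
    return node.side is Side.DEVICE

thermostat = Node("connected-thermostat", Side.DEVICE, cost_usd=80.0, cpu_cores=1)
vehicle = Node("autonomous-vehicle", Side.DEVICE, cost_usd=60000.0, cpu_cores=64)
edge_server = Node("mmdc-server", Side.INFRASTRUCTURE, cost_usd=5000.0, cpu_cores=32)

for node in (thermostat, vehicle, edge_server):
    print(node.name, "device edge" if is_device_edge(node) else "infrastructure edge")
```

Further dimensions such as ownership could be added as extra fields without changing the classification function.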

Figure 2.1 Infrastructure edge computing in context.

These devices may communicate directly with the infrastructure edge using the last mile network, or they may do so through an intermediary device on the device edge such as a gateway. An example of the first type is a smartphone that has an integrated Long‐Term Evolution (LTE) modem and so is able to communicate directly with the LTE last mile network. An example of the second type is a device which has only local range Wi‐Fi connectivity and uses it to connect to a gateway which itself has last mile network access.
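The two connectivity patterns described above can be sketched as a simple path lookup. The `Device` type, its fields, and the device names are hypothetical; the sketch only models whether a device reaches the last mile network itself or through a gateway on the device edge.

```python
from dataclasses import dataclass
from typing import List, Optional

@dataclass
class Device:
    name: str
    has_last_mile_access: bool          # e.g. an integrated LTE modem
    gateway: Optional["Device"] = None  # intermediary reached over a local network

def path_to_infrastructure_edge(device: Device) -> List[str]:
    """Return the hops a device traverses to reach the last mile network."""
    path = [device.name]
    seen = {device.name}
    current = device
    while not current.has_last_mile_access:
        nxt = current.gateway
        if nxt is None or nxt.name in seen:
            raise ValueError(f"{device.name} has no route to the last mile network")
        path.append(nxt.name)
        seen.add(nxt.name)
        current = nxt
    path.append("last mile network")
    return path

smartphone = Device("smartphone", has_last_mile_access=True)
home_gateway = Device("home-gateway", has_last_mile_access=True)
wifi_sensor = Device("wifi-sensor", has_last_mile_access=False, gateway=home_gateway)

print(path_to_infrastructure_edge(smartphone))
print(path_to_infrastructure_edge(wifi_sensor))
```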

In contrast to infrastructure edge computing resources, many devices on the device edge are powered by batteries and are subject to other power constraints due to their limited size or mobile nature. It would be possible to design cooperative processing scenarios using only device edge resources, in which a device utilises compute, storage, or network resources from neighbouring devices in an ad hoc fashion. For the vast majority of use cases and users, however, these approaches have proven unpopular at best, as users do not wish to sacrifice their own limited battery power and processing resources to participate in such a scheme at a large scale. The outliers are projects such as Folding@home, a distributed computing project that is focused on using a network of mains powered computers, not mobile devices. Bearing this in mind, the need of users at the device edge for access to dense compute resources in locations as close as possible to them is met by the infrastructure edge (see Figure 2.2).

Although this book is primarily focused on infrastructure edge computing, topics related to device edge computing will be discussed as appropriate, especially as they relate to the interaction that exists between these two key halves of the edge computing ecosystem and their interoperation.

Figure 2.2 Device edge computing in context.

2.4 A Brief History

As with many technologies, upon close inspection, infrastructure edge computing represents an evolution more than the radical revolution that it may initially appear to be. This does not make it any less significant or impactful; it merely allows us to contextualise infrastructure edge computing within the broader trends which over time have driven much of the development of internet and data centre infrastructure since their inception. This progression lets us understand infrastructure edge computing not as the wild anomaly which it has been portrayed as in the past but as the clear progression of an ongoing theme in network design which has been present for decades and driven by the need to solve both key technical and business challenges using simple and proven principles.

2.4.1 Third Act of the Internet

One framework for understanding the technological progression which has brought us to the point of infrastructure edge computing is the three acts of the internet. This structure distils the evolution of the internet since its inception into three distinct phases, which culminate in the third act of the internet, a state which is driven by new use cases and enabled by infrastructure edge computing.

2.4.1.1 The First Act of the Internet

During the 1970s and 1980s, as the internet began to be available for academic and public use, the types of services it was able to support were basic compared to those which would emerge in the 1990s. Text‐based applications such as bulletin board systems (BBS) and early examples of email represented some of the most complex use cases of the system. With no real‐time element and a simple range of content, the level of centralisation was sufficient to support the small userbase.

It may seem obvious to us in hindsight that the internet would achieve the explosive growth that it has over its lifetime in terms of every possible characteristic from number of users to the volume of data that each individual user would transmit on a daily basis. However, it is a testament to the first principles of the design of the internet that its foundational protocols and technologies have, with the addition of more modern solutions where needed, been able to scale up over time as required.

2.4.1.2 The Second Act of the Internet

During the 1990s and 2000s, internet usage amongst consumers became mainstream as the types of applications and content which the internet supported grew exponentially. Millions of people began to connect to the internet for the first time using dial‐up modem connectivity and other technologies such as cable or DSL, and at the same time more types of content, and far more content in general, became available online. This combination began to strain the infrastructure of the internet and led to the development and deployment of the first physical infrastructure solutions designed specifically to address these newly emerging issues.

The widespread advent of cloud computing during the 2010s further exacerbated this trend as new generations of data centre facilities were required globally. As more applications and data began to move from local on‐premises facilities to remote data centres, the locations of these data centres became more important. Cloud providers began to separate their infrastructure on a per‐country basis and, in the case of the United States or other large countries, to subdivide their presence within that country into smaller regions, as Amazon Web Services (AWS) has done with its US East and US West regions, to optimise performance and the cost of data transportation.
